In our previous post, we talked about our process of specifying MongoDB’s distributed transactions protocol and how it enabled novel analysis of its performance characteristics. In this follow-up, we talk about how the modularity of our specification also enabled us to check that the underlying storage engine implementation actually conforms to the abstract behavior defined in our formal specification. That is, we are able to formalize the interface boundary between the sharded transaction protocol and WiredTiger, the underlying key-value storage engine, and develop an automated way to generate tests for checking conformance between the semantics of the underlying storage engine layer and this abstract model.

As mentioned in the previous post, a deeper exploration of the concepts covered in this post is covered in our recently published VLDB ’25 paper, Design and Modular Verification of Distributed Transactions in MongoDB.

Modular, Model-Based Verification

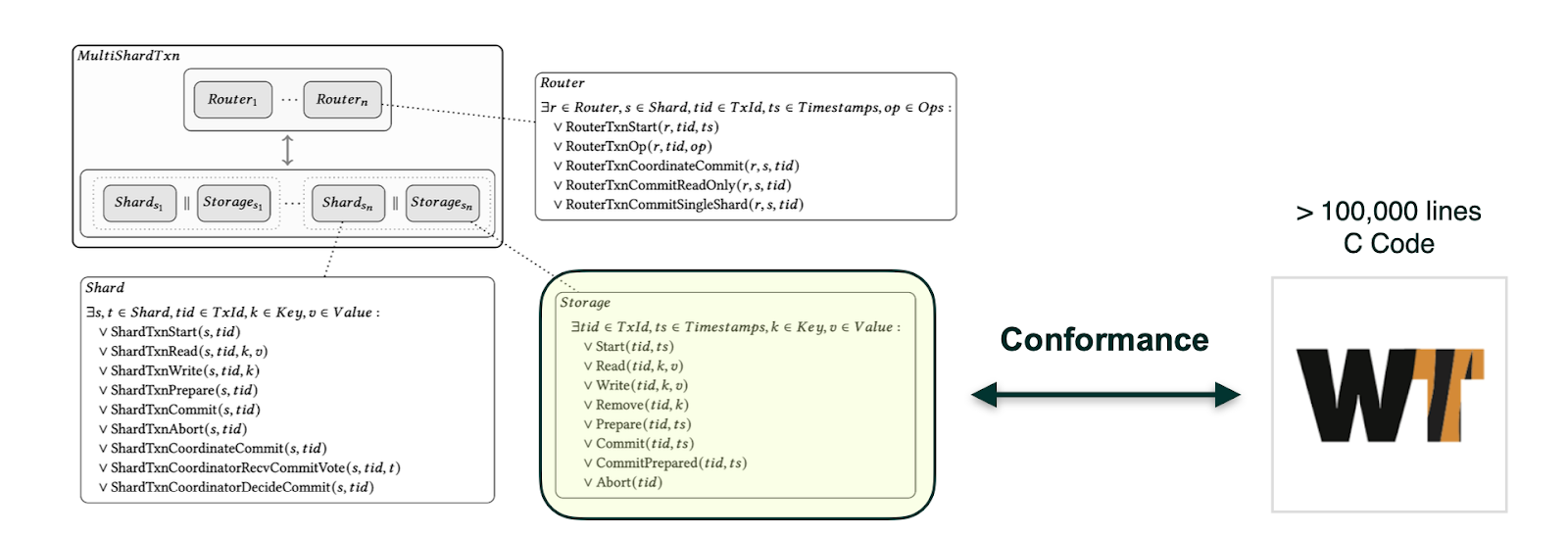

As discussed in Part 1, we had developed a TLA+ specification of MongoDB’s distributed transactions protocol in a compositional manner, describing the high level protocol behavior while also formalizing the boundary between the distributed aspect of the transactions protocol and the underlying single-node WiredTiger storage engine component. As mentioned, the distributed transactions protocol can be viewed as running atop the lower level storage layer.

When considering the correctness guarantees of the distributed transactions protocol, a subtle aspect is its interaction with the concurrency control mechanisms at each layer of the system. In particular, its interaction with the WiredTiger storage engine API, which has various timestamp-based operations implemented to adequately support the correct operation of the distributed transactions protocol.

Our formal model of the distributed transactions protocol was useful for checking its guarantees, but we also wanted to formalize the contract between this distributed protocol and the underlying storage engine, to check that this interface boundary, as defined in our abstract specification, was matched by the implementation. Leveraging the clean interface boundary in our specification, we developed a tool for automatically checking conformance between the WiredTiger implementation and this abstract storage specification (Storage) we defined. Our storage layer specification itself serves as a useful and precise definition of a subset of the WiredTiger storage engine semantics, but we can also use it for automatically generating test cases to check conformance of WiredTiger semantics to our spec.

Figure 1. Compositional specification of distributed transactions in TLA+ and storage engine component.

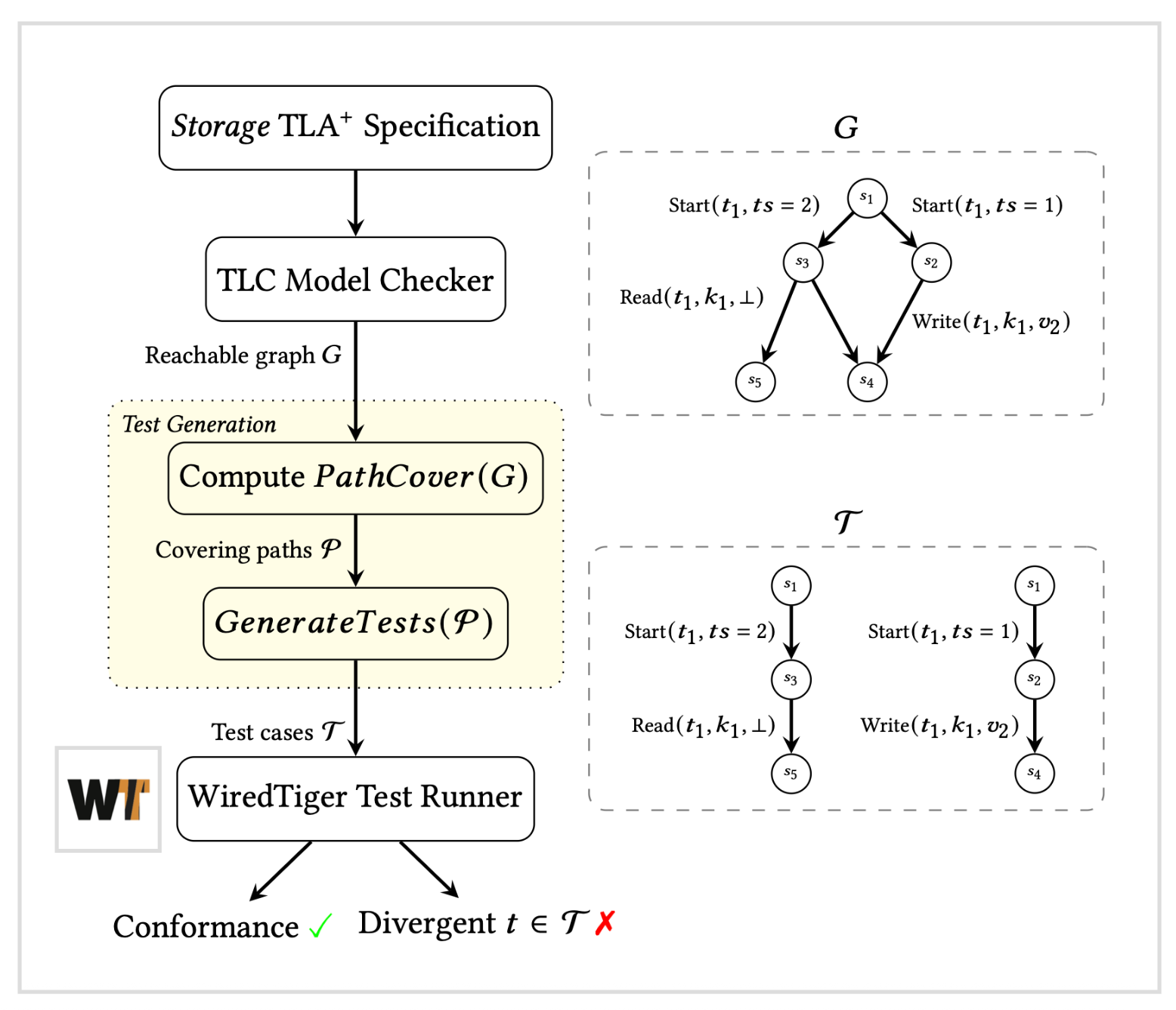

Path-based test case generation

To do this, we make use of a modified version of the TLC model checker to first generate the storage component specification’s complete graph of reachable states for finite parameters. We then compute a set of path coverings in this graph, where each path is then converted to an individual test case as a sequence of underlying storage engine API calls. This model-based verification technique allows us to automatically generate tens of thousands of individual test cases for WiredTiger which each check that the implementation matches the behavior defined in our abstract specification, which is also the contract relied on by the high level, distributed transactions protocol.

Figure 2. Model-based test case generation workflow.

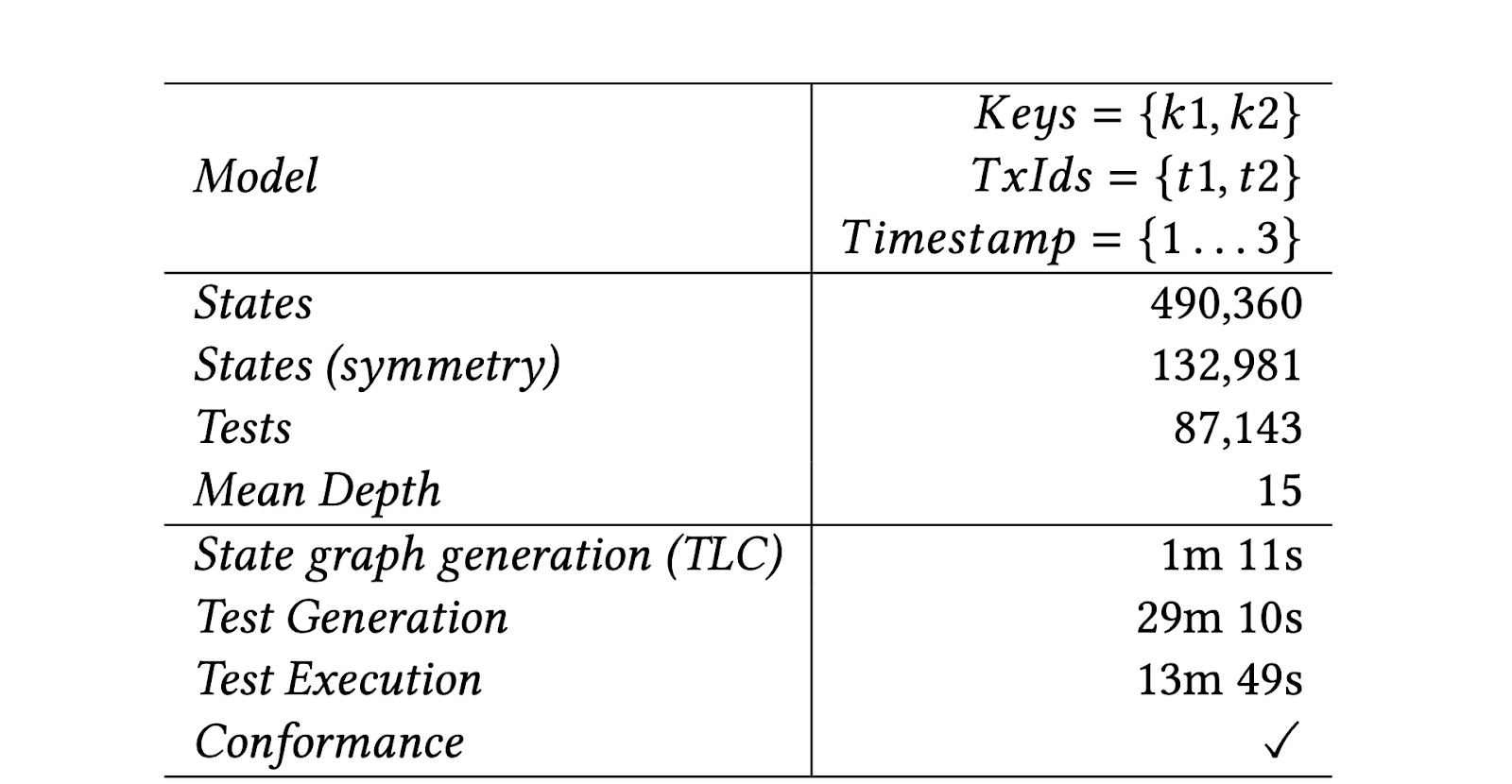

For a small, complete finite model (2 keys and 2 transactions), we are able to check conformance with WiredTiger with a generated suite of 87,143 tests, which are generated and executed against the storage engine in around ~40 minutes.

Figure 3. Test case generation statistics for the storage layer model.

Our current storage layer specification can be found in Github, and a link to an interactive, explorable version of it can also be found in Github. In the future, we hope to explore modeling of a more extensive subset of the WiredTiger API and explore alternate state space exploration strategies for generating tests, e.g., randomized path sampling, or other strategies that only require approximate coverage of the state space model. This approach bears similarity to other testing efforts we had explored in the past.

Conclusion

Modeling our distribution transactions protocol enabled verification of protocol correctness, and our use of modularity and compositional verification was instrumental in letting us reason about these high-level correctness properties while also being able to automatically check that our abstract storage interface correctly matches the semantics of the implementation. Similar approaches have been explored recently for model-based verification of distributed systems, as well as other approaches for testing systems using path-based test case generation techniques. In the future, we also remain optimistic about the role that LLMs can play in this type of verification workflow. For example, once such a testing harness for checking conformance is developed, having LLMs autonomously develop a model of the underlying system behavior allows for precise characterization of behavior, along with automated test suite generation capabilities.

You can find more details about this work in our recently published VLDB ‘25 paper, and in the associated Github repo that contains our specifications and code, as well as links to in-browser, explorable versions of our specifications.