MongoDB Atlas on Amazon Web Services (AWS)

MongoDB Atlas and AWS allow you to build enterprise-ready, intelligent apps with the flexibility, scalability, and high availability you need.

Turn AI into ROI with MongoDB and AWS

MongoDB Atlas on AWS complements Amazon Bedrock and Amazon Bedrock AgentCore, enabling enterprises to orchestrate intelligent agents that can safely act on live business data with full context, across structured and unstructured sources, and under the strictest data governance.

Integrations

Data Analytics & AI/ML

Data Migration and App Modernization

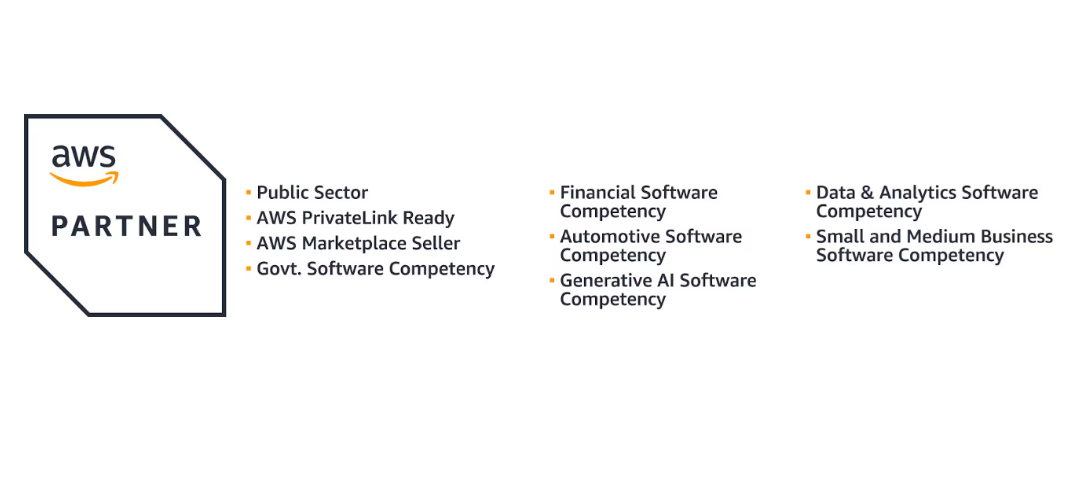

MongoDB and AWS Ecosystem

Build Together with AWS Integrations

MongoDB Atlas and AWS for Industries

Digitalisation Strategy Lead, Novo Nordisk

Principal Engineer, Corporate Strategy, Verizon

Principal Database Engineer, Shutterfly

MongoDB and AWS for Startups

FAQ

MongoDB Atlas is on AWS Marketplace. With just one click, you can get started today with our Pay-As-You-Go option.

To deploy MongoDB on AWS, you can set up a new cluster on MongoDB Atlas, or live-migrate an existing MongoDB deployment using the Atlas Live Migration Service.

Once you have deployed your MongoDB cluster on AWS, either through MongoDB Atlas or as a self-managed cluster, use the cluster’s connection string to access it from the command line or through a MongoDB driver in your language of choice.
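
As a rough illustration of the driver route, the sketch below assembles a connection string and pings the cluster from Python. It assumes the PyMongo driver is installed (with SRV support for `mongodb+srv://` URIs). The host name, user name, and password are placeholders, and the helper names `build_srv_uri` and `ping` are hypothetical. Copy the real connection string for your own cluster from its connection settings rather than relying on the format shown here.

```python
from urllib.parse import quote_plus


def build_srv_uri(username: str, password: str, host: str) -> str:
    """Assemble a mongodb+srv:// connection string (hypothetical helper)."""
    # Reserved characters such as '@', ':' or '/' in credentials must be
    # percent-encoded, otherwise the URI cannot be parsed correctly.
    user = quote_plus(username)
    pwd = quote_plus(password)
    # The query options are common defaults, used here as an assumption.
    return f"mongodb+srv://{user}:{pwd}@{host}/?retryWrites=true&w=majority"


def ping(uri: str) -> bool:
    """Connect with PyMongo and run the server's 'ping' command."""
    # Imported lazily so build_srv_uri works without the driver installed.
    from pymongo import MongoClient

    client = MongoClient(uri, serverSelectionTimeoutMS=5000)
    try:
        return client.admin.command("ping").get("ok") == 1.0
    finally:
        client.close()
```

A call such as `ping(build_srv_uri("app_user", "secret", "cluster0.example.mongodb.net"))` would only succeed against a reachable cluster with matching credentials. The host above is a placeholder, not a real deployment.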

MongoDB Atlas has a free tier that’s ideal for trying out the service as you get comfortable. If you’re using our fully managed platform, you’re only billed for what you use. If you’re managing your own cluster, AWS pricing applies to the resources it uses.

MongoDB Atlas is the fully managed document database service in the cloud, brought to you by the core team at MongoDB. Atlas helps organizations drive innovation at scale by providing a unified way to work with data that addresses operational, search, and analytical workloads across multiple application architectures, all while automatically handling the movement and integration of data for its users.

Visit the MongoDB Developer Community Forum, your hub for events, user groups, programs, and discussions. Connect, share, and build skills.