Over the course of our Building AI with MongoDB blog post series, we’ve seen many organizations using AI to shape product development and support. Examples we’ve profiled so far include:

Ventecon’s co-pilot helping product managers generate and refine specifications for new products

Cognigy’s conversational AI solutions empowering businesses to provide instant and personalized customer service in any language and for any channel

Kovai’s AI assistant helping users quickly discover information from product documentation and knowledge bases

In this roundup of the latest AI builders, I’ll focus on three more companies innovating across the product lifecycle. I’ll start with Zelta, which helps teams prioritize product roadmaps using live customer insights and sentiment. Then I’ll move on to Crewmate, which connects products to engaged communities of users. I’ll wrap up with Ada, which helps product companies like Meta and Verizon better support their customers through AI-driven automation.

Check out our AI Learning Hub to learn more about building AI-powered apps with MongoDB.

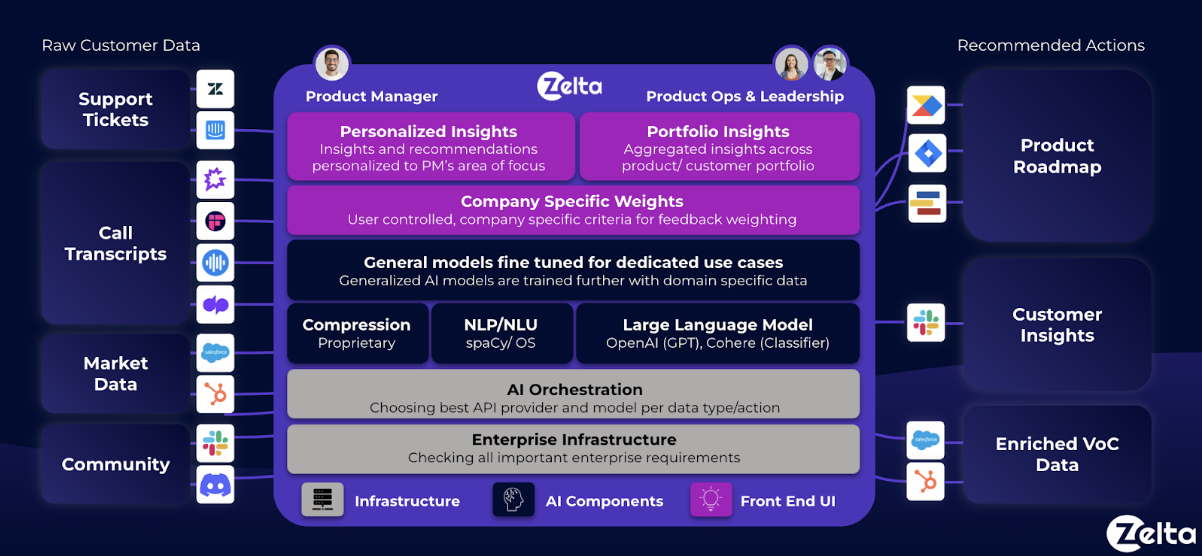

Zelta.AI: Prioritizing product roadmaps with data-driven customer analytics

Today's digital economy means customer feedback streams into the enterprise from a multitude of physical and digital touchpoints. For product managers, it can seem an impossible task to synthesize this feedback into themes and priorities that underpin a coherent development plan everyone in the business commits to. This is the problem Zelta.ai was founded to address.

Zelta uses generative AI to surface insights about customer pain points found in companies’ most valuable asset: qualitative sources of customer feedback such as call transcripts and tickets. It pulls this data directly from platforms like Gong, Zoom, Fireflies, Zendesk, Jira, and Intercom, among others.

The company’s engineering team uses a combination of fine-tuned OpenAI GPT-4, Cohere, and Anthropic models to extract, classify, and encode source data into trends and sentiment around specific topics and features. MongoDB Atlas is used as the data storage layer for source metadata and model outputs.

“The flexibility MongoDB provides us has been unbelievable,” says Mick Cunningham, CTO and Co-Founder at Zelta AI. “My development team can constantly experiment with new features, just adding fields and evolving the data model as needed without any of the expensive schema migration pains imposed by relational databases.”

Cunningham goes on to say, “We also make heavy use of the MongoDB aggregation pipeline for application-driven intelligence. Without having to ETL data out of MongoDB, we can analyze data in place to provide customers with real-time dashboards and reporting of trends in product feedback. This helps them make product decisions faster, making our service more valuable to them.”
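To give a feel for what analyzing data in place can look like, here is a minimal Python sketch of an aggregation pipeline that ranks feedback topics by mention count and average sentiment. It is not Zelta's actual pipeline: the field names (`created_at`, `topic`, `sentiment`) and both function names are assumptions made for illustration, and `collection` is assumed to be a PyMongo collection.

```python
from datetime import datetime, timedelta, timezone


def build_trend_pipeline(since, top_n=10):
    """Build an aggregation pipeline that ranks feedback topics.

    Assumed (hypothetical) document shape:
    {"topic": str, "sentiment": float, "created_at": datetime}
    """
    return [
        # Keep only feedback received on or after `since`.
        {"$match": {"created_at": {"$gte": since}}},
        # One output document per topic, with a count and mean sentiment.
        {"$group": {
            "_id": "$topic",
            "mentions": {"$sum": 1},
            "avg_sentiment": {"$avg": "$sentiment"},
        }},
        # Most-mentioned topics first, capped at `top_n`.
        {"$sort": {"mentions": -1}},
        {"$limit": top_n},
    ]


def topic_trends(collection, days=30):
    """Run the pipeline inside the database; nothing is exported first."""
    since = datetime.now(timezone.utc) - timedelta(days=days)
    return list(collection.aggregate(build_trend_pipeline(since)))
```

Because the grouping and sorting happen inside the database, a dashboard can call something like `topic_trends` on demand rather than waiting on an export job.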

Looking forward, Zelta plans on creating its own custom models, and MongoDB will prove invaluable as a source of labeled data for supervised model training. Zelta is a member of the MongoDB AI Innovators program, taking advantage of free Atlas credits, access to technical support, and exposure to the wider MongoDB community.

Crewmate: Helping brands connect with their communities

In the digital economy, brands can spend millions of dollars growing online communities populated with highly engaged users of their products and services. However, many of the tools used for building communities are third-party solutions that abstract away a brand’s visibility into user engagement. This is an issue Crewmate is working to address.

Crewmate is a no-code builder for embedded AI-powered communities. The company’s builder provides customizable communities for brands to deploy directly onto their websites. Crewmate is already used today across companies in consumer packaged goods (CPG), B2B SaaS, gaming, Web3, and more.

Crewmate starts by scraping a brand’s website, along with open job postings and customer data from CRM systems. Scraped data is stored in its MongoDB Atlas database running on Google Cloud. An Atlas Trigger then calls OpenAI’s text-embedding-ada-002 (ada-002) embedding model, storing the resulting vector embeddings in MongoDB, where they are indexed by Atlas Vector Search. This event-driven pipeline keeps the embeddings fresh: the Atlas Trigger fires as soon as new website data is inserted into the MongoDB database.
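The embed-on-insert step can be sketched roughly as follows. Atlas Triggers themselves run JavaScript functions, so this Python version only illustrates the shape of the logic, not Crewmate's code. The `content` and `embedding` field names are assumptions, `embed` stands in for whatever call reaches the embedding model, and `collection` is assumed to expose PyMongo's `update_one`.

```python
def embed_new_document(change_event, embed, collection,
                       text_field="content", vector_field="embedding"):
    """Handle one insert event: embed the text and store the vector.

    `embed` is any callable mapping a string to a list of floats
    (for example, a thin wrapper around an embeddings API).
    Returns True if a vector was written, False if there was no text.
    """
    doc = change_event.get("fullDocument") or {}
    text = doc.get(text_field)
    if not text:
        # Nothing to embed, so leave the document untouched.
        return False

    vector = embed(text)
    # Write the vector back onto the same document so the source data
    # and its embedding live together in one place.
    collection.update_one({"_id": doc["_id"]}, {"$set": {vector_field: vector}})
    return True
```

Since the handler reacts to inserts and writes back with an update, the write does not retrigger an insert-only trigger.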

Using context-aware semantic search powered by Atlas Vector Search, users hitting and browsing the community pages on a brand’s website are automatically served relevant content. This includes posts from social media feeds, forum discussions, job postings, special offers, and more.
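As a rough illustration of the query side, the sketch below builds a `$vectorSearch` aggregation pipeline in Python. The index name, the `embedding` path, and the projected `title` and `type` fields are assumptions rather than details of Crewmate's deployment. The query vector is assumed to come from the same embedding model used at ingest time.

```python
def build_vector_search_pipeline(query_vector, limit=5,
                                 index="vector_index", path="embedding"):
    """Build a pipeline returning the `limit` nearest documents.

    `index` and `path` are hypothetical names; they must match the
    Atlas Vector Search index defined on the collection.
    """
    return [
        {"$vectorSearch": {
            "index": index,
            "path": path,
            "queryVector": list(query_vector),
            # Consider more candidates than we return to improve recall.
            "numCandidates": limit * 20,
            "limit": limit,
        }},
        # Return a few display fields plus the similarity score.
        {"$project": {
            "_id": 0,
            "title": 1,
            "type": 1,
            "score": {"$meta": "vectorSearchScore"},
        }},
    ]
```

With a PyMongo collection, the results would be fetched with `collection.aggregate(build_vector_search_pipeline(vec))`, where `vec` is the embedded representation of the page or query the user is viewing.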

“I’ve used MongoDB in past projects and knew that its flexible document schema would allow me to store data of any structure. This is particularly important when ingesting many different types of data from my clients’ websites,” says Raj Thaker, CTO and Co-Founder of Crewmate.

“The introduction of Atlas Vector Search and the Building Generative AI Applications tutorial gave me a fast, ready-made blueprint that brings together a database for source data, vector search for AI-powered semantic search, and reactive, real-time data pipelines to keep everything updated, all in a single platform with a single copy of the data and a unified developer API. This keeps my engineering team productive and my tech stack streamlined. Atlas also provides integrations with the fast-evolving AI ecosystem. So while today I’m using OpenAI models, I have the flexibility to easily integrate with other models, such as Llama, in the future.”

Thaker goes on to say, “One of Crewmate’s major value creations is the insights brands can extract. Using the powerful and expressive MongoDB Query API I can process, aggregate, and analyze user engagement data so that brands can track community outreach efforts and conversions. They can generate this intelligence directly from their app data stored in MongoDB, avoiding the need to ETL it out into a separate data warehouse or data lake.”
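One way such engagement reporting might be expressed is a pipeline that computes a conversion rate per content type. This is a hedged sketch only: the `brand_id`, `content_type`, and `action` fields, and the `"conversion"` action value, are invented for the example.

```python
def build_conversion_pipeline(brand_id):
    """Conversion rate per content type for one brand.

    Assumed (hypothetical) event shape:
    {"brand_id": ..., "content_type": str, "action": str}
    """
    return [
        {"$match": {"brand_id": brand_id}},
        {"$group": {
            "_id": "$content_type",
            "events": {"$sum": 1},
            # Count only the events whose action is a conversion.
            "conversions": {"$sum": {
                "$cond": [{"$eq": ["$action", "conversion"]}, 1, 0]
            }},
        }},
        # `events` is at least 1 for every group, so the division is safe.
        {"$addFields": {
            "conversion_rate": {"$divide": ["$conversions", "$events"]}
        }},
        {"$sort": {"conversion_rate": -1}},
    ]
```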

Like Zelta, Crewmate is also part of MongoDB’s AI Innovators program.

Ada: Revolutionizing customer service with AI-powered automations built on MongoDB Atlas

Founded in 2016, Ada has become a leader in automating complex service interactions across any channel and modality. The company has raised close to $200 million, has 300 employees, and counts Meta, Verizon, and AT&T among its 300 customers.

Mike Gozzo, Ada’s Chief Product and Technology Officer, was interviewed at a recent MongoDB developer conference, where he discussed the evolution of AI for customer service and the role MongoDB plays in Ada’s AI stack.

Gozzo makes the point that while bots for customer service aren’t new, the huge advancements in transformer models and LLMs coupled with reinforcement learning from human feedback (RLHF) have made these assistants far more capable. Rather than just search for information, they can use advanced reasoning to solve customer problems.

Asked why Ada selected MongoDB Atlas to underpin all its products, Gozzo says, “Having the flexibility and ability to just pivot on a dime was really important. We saw that as we advanced the company and brought in new channels and new modalities, having one data store that can be easily extended without crazy migrations and that would really support our needs was absolutely clear from MongoDB. We’ve always stayed the path with Atlas because the performance is there, the support from the team is great, and we believe in having less dependency on one central cloud vendor that MongoDB allows.”

Gozzo goes on to say, “Using MongoDB means we’re not limited in how we source data if we want to build something new. We can query unstructured data and use it to train other models. We use generative AI effortlessly throughout our product stack to automate queries and provide support that goes beyond just answering multi-step queries. With MongoDB, we’re able to ship new products in just a few months.”

Going forward, Ada is starting to use MongoDB Change Streams to build a distributed event processing system that powers bots and analytics. It is also exploring Queryable Encryption, which helps advance AI training while keeping conversations private.
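For readers unfamiliar with Change Streams, the sketch below shows the general pattern of routing change events to handlers. It is an illustration under assumptions, not Ada's system: the handler registry and function names are hypothetical, and `collection` is assumed to be a PyMongo collection, whose `watch` method opens the stream.

```python
def build_change_stream_pipeline(operation_types=("insert", "update")):
    """Filter the stream down to the operation types we care about."""
    return [{"$match": {"operationType": {"$in": list(operation_types)}}}]


def dispatch(event, handlers):
    """Route one change event to the handler for its operation type.

    `handlers` maps an operation type (e.g. "insert") to a callable.
    Returns the handler's result, or None if no handler is registered.
    """
    handler = handlers.get(event.get("operationType"))
    if handler is None:
        return None
    return handler(event)


def run(collection, handlers):
    """Consume the change stream and dispatch events as they arrive."""
    pipeline = build_change_stream_pipeline(tuple(handlers))
    # "updateLookup" asks the server to include the current full
    # document on update events, not just the changed fields.
    with collection.watch(pipeline, full_document="updateLookup") as stream:
        for event in stream:
            dispatch(event, handlers)
```

A bot service and an analytics service could each run their own consumer with different handlers, which is one way a stream of database changes can feed several downstream systems.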

In his Voice of the Customer interview with Amazon Web Services (AWS), Gozzo explains how velocity drives all product development at Ada. Velocity is measured both by how quickly the company can ship products and features and by how quickly it can learn and iterate. Running MongoDB Atlas on AWS alongside serverless Lambda functions and LLMs through Amazon Bedrock lets Ada deliver its applications scalably, repeatably, and with high performance.

Getting started

Check out our library of AI case studies to see the range of applications developers are building with MongoDB. Head over to our quick-start guide to get started with Atlas Vector Search today.