There has been a lot of recent reporting on the desire to regulate AI. But very little has been said about how AI itself can assist with regulatory compliance. In our latest round-up of qualifiers for the MongoDB AI Innovators Program, we feature a company that is doing just that in one of the world’s most heavily regulated industries.

Helping comply with regulations is just one way AI can assist us. We hear a lot about copilots coaching developers to write higher-quality code faster. But this isn’t the only domain where AI-powered copilots can shine. To round out this blog post, we provide two additional examples – a copilot for product managers that helps them define better specifications and a copilot for sales teams to help them better engage customers.

We launched the MongoDB AI Innovators Program back in June this year to help companies like these “build the next big thing” in AI. Whether a freshly minted start-up or an established enterprise, you can benefit from the program, so go ahead and sign up. In the meantime, let's explore how innovators are using MongoDB for use cases ranging from compliance to copilots.

Check out our AI Learning Hub to learn more about building AI-powered apps with MongoDB.

AI-powered compliance for real-time healthcare data

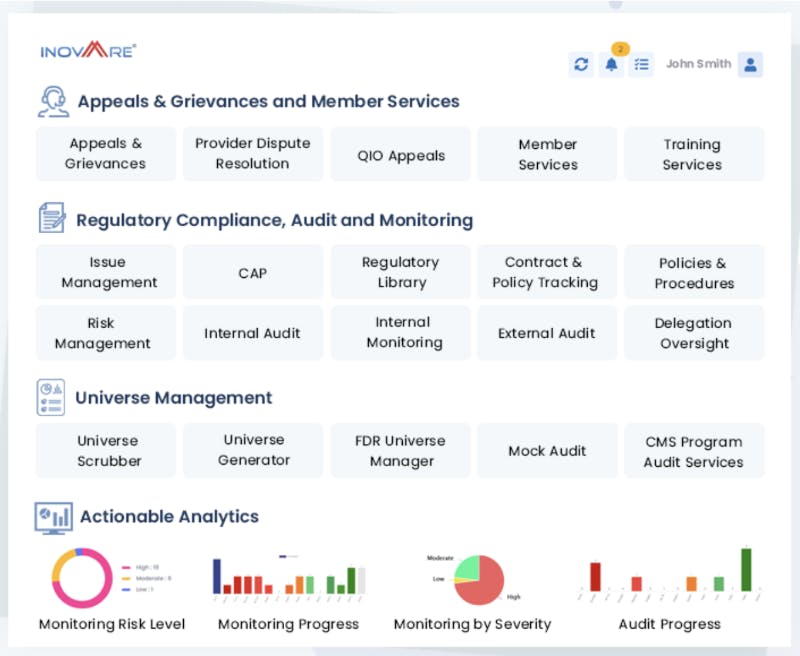

Inovaare transforms complex compliance processes by designing configurable AI-driven automation solutions. These solutions help healthcare organizations collect real-time data across internal and external departments, creating one compliance management system. Founded 10 years ago and now with 250 employees, Inovaare's comprehensive suite of HIPAA-compliant software solutions enables healthcare organizations across the Americas to efficiently meet their unique business and regulatory requirements. With these solutions, organizations can sustain audit readiness, reduce non-compliance risks, and lower overall operating costs.

Inovaare uses classic and generative AI models to power a range of services. Custom models are built with PyTorch while LLMs are built with transformers from Hugging Face and developed and orchestrated with LangChain. MongoDB Atlas powers the models’ underlying data layer.

Models are used for document classification along with information extraction and enrichment. Healthcare professionals can work with this data in multiple ways including semantic search and the company’s Question-Answering chatbot. A standalone vector database was originally used to store and retrieve each document’s vector embeddings as part of in-context model prompting. Now Inovaare has migrated to Atlas Vector Search. This migration helps the company’s developers build faster through tight vector integration with the transactional, analytical, and full-text search data services provided by the MongoDB Atlas platform.
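To make the semantic search step more concrete, here is a minimal sketch of how a query for Atlas Vector Search might be assembled using the `$vectorSearch` aggregation stage. The index name, field names, and filter are assumptions for illustration only; the source does not describe Inovaare's actual schema or queries.

```python
from typing import Any, Optional


def build_vector_search_pipeline(
    query_vector: list[float],
    *,
    index: str = "doc_embedding_index",  # hypothetical index name
    path: str = "embedding",             # hypothetical field holding the vectors
    limit: int = 5,
    num_candidates: int = 100,
    pre_filter: Optional[dict[str, Any]] = None,
) -> list[dict[str, Any]]:
    """Build an aggregation pipeline that runs an approximate nearest-neighbor search."""
    if num_candidates < limit:
        raise ValueError("num_candidates must be >= limit")

    stage: dict[str, Any] = {
        "index": index,
        "path": path,
        "queryVector": query_vector,
        "numCandidates": num_candidates,
        "limit": limit,
    }
    if pre_filter is not None:
        # Fields used here must be declared as filter fields in the index.
        stage["filter"] = pre_filter

    return [
        {"$vectorSearch": stage},
        # Return a few (hypothetical) fields plus the similarity score.
        {
            "$project": {
                "_id": 1,
                "title": 1,
                "doc_type": 1,
                "score": {"$meta": "vectorSearchScore"},
            }
        },
    ]
```

With PyMongo, the resulting list could be passed to `collection.aggregate(pipeline)`. Because the vectors live in the same collection as the documents, the optional filter can narrow results by ordinary document fields in the same query.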

Inovaare also uses AI agents to orchestrate complex workflows across multiple healthcare business processes, with data collected from each process stored in the MongoDB Atlas database. Business users can visualize the latest state of healthcare data with natural language questions translated by LLMs and sent to Atlas Charts for dashboarding.

Inovaare selected MongoDB because its flexible document data model enables the company's developers to store and query data of any structure. This, coupled with Atlas’ HIPAA compliance, end-to-end data encryption, and the freedom to run on any cloud (supporting almost any application workload), helps the company innovate and release faster and at lower cost than it could by stitching together an assortment of disparate databases and search engines.

Going forward, Inovaare plans to expand into other regions and compliance use cases. As part of MongoDB’s AI Innovators Program, the company’s engineers get to work with MongoDB specialists at every stage of their journey.

The AI copilot for product managers

The ultimate goal of any venture is to create and deliver meaningful value while achieving product-market fit. Ventecon's AI Copilot supports product managers in their mission to craft market-leading products and solutions that contribute to a better future for all.

Hundreds of bots currently crawl the Internet, identifying and processing over 1,000,000 pieces of content every day. This content includes details on product offerings, features, user stories, reviews, scenarios, acceptance criteria, and issues through market research data from target industries. Processed data is stored in MongoDB. Here it is used by Ventecon’s proprietary NLP models to assist product managers in generating and refining product specifications directly within an AI-powered virtual space.

Patrick Beckedorf, co-founder of Ventecon, says: “Product data is highly context-specific and so we have to pre-train foundation models with specific product management goals, fine-tune with contextual product data, include context over time, and keep it up to date. In doing so, every product manager gets a digital, highly contextualized expert buddy.”

Currently, vector embeddings from the product data stored in MongoDB are indexed and queried in a standalone vector database. As Beckedorf says, the engineering team is now exploring a more integrated approach. “The complexity of keeping vector embeddings synchronized across both source and vector databases, coupled with the overhead of running the vector store ties up engineering resources and may affect indexing and search performance. A solid architecture therefore provides opportunities to process and provide new knowledge very fast, i.e. in Retrieval-Augmented Generation (RAG), while bottlenecks in the architecture may introduce risks, especially at scale. This is why we are evaluating Atlas Vector Search to bring source data and vectors together in a single data layer. We can use Atlas Triggers to call our embedding models as soon as new data is inserted into the MongoDB database. That means we can have those embeddings back in MongoDB and available for querying almost immediately.”
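The insert-then-embed flow Beckedorf describes can be sketched roughly as follows. Atlas Triggers themselves run JavaScript functions, so this Python version only illustrates the logic against a dictionary shaped like a change event. The `toy_embed` function is a placeholder for a real embedding model, and the `description` and `embedding` field names are hypothetical.

```python
import hashlib
from typing import Any, Callable, Optional


def toy_embed(text: str, dims: int = 8) -> list[float]:
    """Deterministic stand-in for an embedding model (not semantically meaningful)."""
    digest = hashlib.sha256(text.encode("utf-8")).digest()
    return [byte / 255.0 for byte in digest[:dims]]


def on_insert(
    change_event: dict[str, Any],
    embed: Callable[[str], list[float]] = toy_embed,
    text_field: str = "description",  # hypothetical source field
    vector_field: str = "embedding",  # hypothetical target field
) -> Optional[tuple[dict[str, Any], dict[str, Any]]]:
    """Return (filter, update) for a newly inserted document, or None to skip it."""
    if change_event.get("operationType") != "insert":
        return None

    doc = change_event.get("fullDocument") or {}
    text = doc.get(text_field)
    if not text:
        return None  # nothing to embed

    # Write the vector back onto the same document it was derived from.
    return {"_id": doc["_id"]}, {"$set": {vector_field: embed(text)}}
```

The returned pair could be passed to an update call such as PyMongo's `collection.update_one(filter, update)`. Since the embedding is stored on the source document itself, there is no second system to keep in sync.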

For Beckedorf, the collaboration with data pioneers and the co-creation opportunities with MongoDB are the most valuable aspects of the AI Innovators Program.

AI sales email coaching: 2x reply rates in half the time

Lavender is an AI sales email coach. It assists users in real time to write better emails faster. Sales teams who use Lavender report they’re able to write emails in less time and receive twice as many replies. The tool uses generative AI to help compose emails. It personalizes introductions for each recipient and scores each email as it is being written to identify anything that hurts the chances of a reply. Response rates are tracked so that teams can monitor progress and continuously improve performance using data-backed insights.

OpenAI’s GPT LLMs along with ChatGPT collaboratively generate email copy with the user. The output is then analyzed and scored through a complex set of business logic layers built by Lavender’s data science team, which yield industry-leading, high-quality emails. Together, the custom and generative models help write subject lines, remove jargon and fix grammar, simplify unwieldy sentences, and optimize formatting for mobile devices. They can also retrieve the recipient’s (and their company’s) latest publicly posted information to help personalize and enrich outreach.

MongoDB Atlas running on Google Cloud backs the platform. Lavender’s engineers selected MongoDB because of the flexibility of its document data model. They can add fields on demand without lengthy schema migrations and can store data of any structure. This ranges from structured data such as user profiles and response tracking metrics through to semi-structured and unstructured email copy and associated ML-generated scores. The team is now exploring Atlas Vector Search to further augment LLM outputs by retrieving similar emails that have performed well. Storing, syncing, and querying vector embeddings right alongside application data will help the company’s engineers build new features faster while reducing technology sprawl.
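The idea of retrieving similar emails that have performed well can be illustrated with a small in-memory sketch. It ranks stored emails by cosine similarity to a draft's embedding and keeps only those that received a reply. The record fields (`embedding`, `got_reply`), the sample subjects, and the tiny three-dimensional vectors are all assumptions for illustration; with Atlas Vector Search, the ranking would run inside the database rather than in application code.

```python
import math
from typing import Any


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def similar_successful_emails(
    draft_vec: list[float], emails: list[dict[str, Any]], k: int = 2
) -> list[dict[str, Any]]:
    """Return the k replied-to emails most similar to the draft."""
    candidates = [e for e in emails if e.get("got_reply")]
    ranked = sorted(
        candidates,
        key=lambda e: cosine_similarity(draft_vec, e["embedding"]),
        reverse=True,
    )
    return ranked[:k]


# Hypothetical sample data: structured metrics stored next to each email's vector.
emails = [
    {"subject": "Quick question about onboarding", "embedding": [0.9, 0.1, 0.0], "got_reply": True},
    {"subject": "Our Q3 product newsletter", "embedding": [0.0, 0.2, 0.9], "got_reply": True},
    {"subject": "Following up on onboarding", "embedding": [0.8, 0.2, 0.1], "got_reply": False},
]
```

Calling `similar_successful_emails([1.0, 0.0, 0.0], emails)` ranks the onboarding email that got a reply first and excludes the follow-up that did not, even though its vector is also close to the draft. The retrieved examples could then be supplied to the LLM as additional context when generating copy.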

What's next?

We have more places left in our AI Innovators Program, but they are filling up fast, so sign up directly on the program’s web page. We are accepting applications from a diverse range of AI use cases. To get a flavor of that diversity, take a look at our blog post announcing the first program qualifiers who are building AI with MongoDB. You’ll see use cases that take AI to the network edge for computer vision and Augmented Reality (AR), risk modeling for public safety, and predictive maintenance paired with Question-Answering systems for maritime operators.

Head over to our quick-start guide to get started with Atlas Vector Search today.

Also, check out our MongoDB for Artificial Intelligence resources page for the latest best practices that get you started in turning your idea into AI-driven reality.