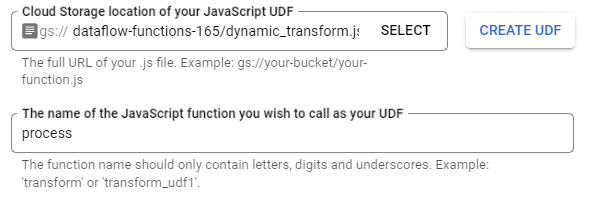

We are trying to load our current data from MongoDB into BigQuery on a scheduled basis. We are currently using Google Cloud's Dataflow service with the MongoDB to BigQuery (batch) template and a UDF. Unfortunately, I can't get the UDF to work for this job when launching it from the Cloud Console. The job runs perfectly and correctly loads all of the data into BigQuery, but the UDF is not applied.

Below is an example UDF I have tried. It should simply add a new string field to each document, but that does not work either.

```javascript
/**
 * User-defined function (UDF) to transform elements as part of a Dataflow
 * template job.
 *
 * @param {string} inJson input JSON message (stringified)
 * @return {?string} outJson output JSON message (stringified)
 */
function process(inJson) {
  const obj = JSON.parse(inJson);
  obj.udftest = "udftest";
  return JSON.stringify(obj);
}
```
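One way to rule out the function itself is to run it locally under Node.js before uploading it. The sketch below assumes Node.js is available; Dataflow runs UDFs in its own JavaScript engine, so a local pass only shows that the logic is correct, not that the template picks the file up. The sample document is hypothetical, and the function is renamed `transformDoc` here only so that it does not shadow Node's global `process` object.

```javascript
// Local sanity check for the UDF above (run with: node check-udf.js).
// Same body as the UDF; renamed only to avoid shadowing Node's global `process`.
function transformDoc(inJson) {
  const obj = JSON.parse(inJson);
  obj.udftest = "udftest";
  return JSON.stringify(obj);
}

// Hypothetical MongoDB document, passed in as a JSON string,
// matching the UDF's string-in / string-out contract.
const sampleDoc = { _id: "64a1f0c2e4b0a1b2c3d4e5f6", name: "example", count: 3 };

const out = JSON.parse(transformDoc(JSON.stringify(sampleDoc)));

// The original fields should survive and the new field should be present.
console.log(out);
console.log("udftest added:", out.udftest === "udftest");
```

If the field shows up locally but not in BigQuery, the function logic is probably fine, and the problem is more likely in how the template is told to load and call it (for example, the Cloud Storage path to the `.js` file or the function name it is configured to invoke).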

What am I doing wrong? The UDF seems to be ignored entirely. When `userOption` is `FLATTEN`, the job loads the flattened data, and when it is `NONE`, it loads the documents in their normal JSON format, but in neither case does the `udftest` field appear. The pipeline still completes successfully without throwing any error.