I am using compass v1.36.0 . most of the time getting errors like “Operation exceeded the time limit. Please try increasing the maxTimeMS for the query in the expanded filter options.”

Actually, I have three collection

studenteachers collection

[{

"user_id": "313031393137303000",

"studen_Ind": "Y",

"client_Id": "LA0XDA",

"clientName": "LA0XDA",

"country_id": "CRYVN",

"country_name": "VIETNAM",

"userClient_id": "101908617",

"user_status": "A",

"geo": "ASEAN",

"programName": "X",

"site_id": "W26872",

"site_name": "VNXG02",

"state_id": "VN_REGION",

"subprogram_name": "LAXSub",

"supervisor_email": "minhn@gma.com",

"supervisor_id": "101483574",

"teacher_IND": "N"

}]

in the studentteacher collection

- we have more than 4laks records,

- student, teachers, and their respective site and client details

- along with we have user_status(A- active , I- inactive) details

- We can group the student or teachers client-wise / site-wise/geo-wise/country-wise.

AccessSettings Collection

{"_id":"33353453534",

"client_Id":"AA0VIIRP",

"client_name":"AA0VIIRP",

"geo":"NORTH_AMERICA",

"country_Id":"CRYUS",

"country_Name":"US",

"site_id":"834",

"site_name":"USVIIO-00",

"status":"A"}

in the AccessSettings collection, we have account and site details [ status-: A - mean Active clients under the site, status-I mean inactive clients under the site…]

We can get all the access enabled client and their site details from the Access settings collection.

Attendance Collection

{

"_id": "64071b221078082370f5c4e8",

"attendance_date": "2023-03-07T00:00:00.000Z",

"user_id": "313031383337333000",

"geo": "ASEAN",

"programName": "X",

"site_id": "W26872",

"site_name": "VNXG02",

"state_id": "VN_REGION",

"studen_Ind": "Y",

"client_Id": "LA0XDA",

"clientName": "LA0XDA",

"country_id": "CRYVN",

"country_name": "VIETNAM",

"userClient_id": "101908617"

}

we can expect the minimum record count for this collection is more than 50lakhs per month.

we need the output like below

{Date :

client_name :

geo :

country :

site :

ActiveStudentCount :

ActiveTeacherCount :

StudentAttendanceCount :

TeacherAttendanceCount :

userAttendanceCount:

studAttendanceCount:

teacherAttendanceCount:

}

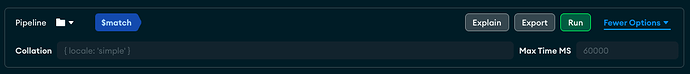

Query :

[

{ $group: {

_id: {

client: "$clientName",

clientId: "$clientId",

geo: "$geo",

country: "$country_name",

site: "$site_name",

studentInd: "$studen_Ind",

teacherInd: "$teacher_Ind",

userid: "$user_id",

attendanceDate: "$attendance_date",

},attendancecount: {$sum: 1,}}},

{$group: {

_id: {

client: "$_id.client",

geo: "$_id.geo",

country: "$_id.country",

site: "$_id.site",

attendanceDate: "$_id.attendanceDate",

},

userAttendanceCount: {$sum: "$attendancecount",},

studAttendanceCount: {$sum: {$cond: [{$eq: ["_id.studentInd", "Y"],},1,0,]}},

teacherAttendanceCount: {$sum: {$cond: [{$eq: ["_id.teacherInd", "Y"],},1,0,]}}

}},

{$lookup: {

from: "accessSettings",

let: {site: "$_id.site",client: "$_id.client"},

pipeline: [

{$match: {$expr: {$and:[{$eq: ["$site_name", "$$site"]},

{$eq: ["$client_name","$$client"]},

{$eq: ["$status", "A"]}]

}}}],

as: "result",}},

{

$lookup: {

from: "studentTeacher",

let: {site: "$_id.site",client: "$_id.client"},

pipeline: [{$match: {$expr: {$and: [{$eq: ["$site_name", "$$site"]},

{$eq: ["$clientName","$$client"]}]}}}],

as: "res"}},

{$unwind: {path: "$res",preserveNullAndEmptyArrays: true}},

{$group: {_id:

{client: "$_id.client",geo: "$_id.geo",country: "$_id.country",state: "$_id.state",site:

"$_id.site",attendanceDate: "$_id.attendanceDate"},

userAttendanceCount: {$max: "$userAttendanceCount"},

studAttendanceCount: {$max: "$studAttendanceCount"},

teacherAttendanceCount: {$max: "$teacherAttendanceCount"},

ActiveuserCount: {$sum: 1,},

ActiveAgentCount: {$sum: {$cond: [{$eq: ["$res.studen_Ind", "Y"]},1,0]}},

ActiveSupervisorCount: {$sum: {$cond: [{$eq: ["$res.teacher_IND", "Y"]},1,0]}}}

},

]

maximum time getting the “Operation exceeded the time limit.” error, please verify the query and suggest a way to improve the performance. Please help me with this