Hi everyone!

I’ve identified a memory leak in an application I’m working on, which causes it to crash after a while when it runs out of memory. Fortunately, we’re running it on Kubernetes, so the other replicas and an automatic restart of the crashed pod keep the software running without downtime. I’m worried about potential data loss or data corruption, though.

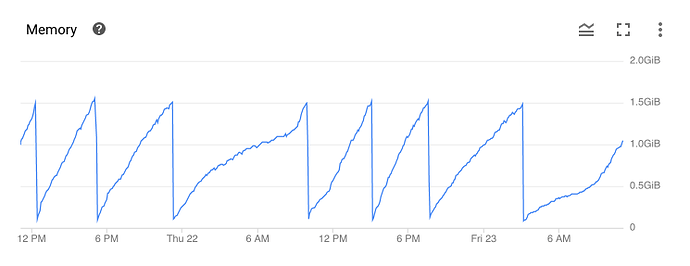

The memory leak is seemingly tied to HTTP requests. According to this graph, memory usage increases more rapidly during the day when most of our users are active:

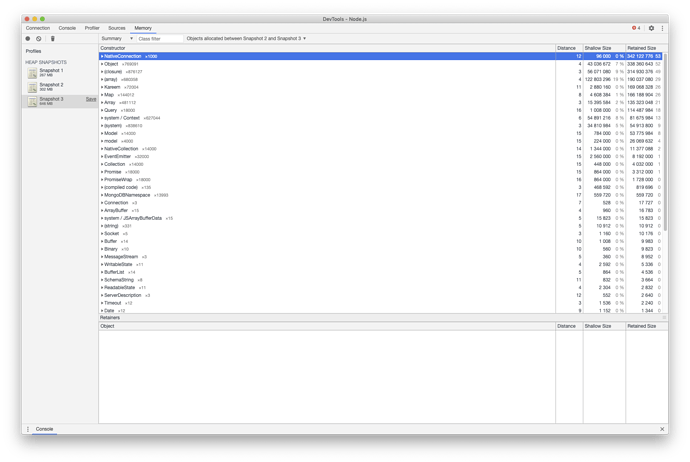

To find the memory leak, I attached the Chrome debugger to an instance of the application running on localhost. I took a heap snapshot and then ran a script to trigger 1000 HTTP requests. Afterwards, I triggered a manual garbage collection and took another heap snapshot. Then I opened a comparison view between the two snapshots:

As can be seen in the screenshot, the increase in memory usage is mainly caused by 1000 new NativeConnection objects. They remain in memory and thus accumulate over time.
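For anyone who wants to reproduce the measurement, the request burst between the two snapshots can be scripted roughly as follows. This is a minimal sketch rather than the exact script I used: the function names `sendRequest` and `sendRequests` are hypothetical, and it assumes the application answers plain HTTP GET requests.

```javascript
const http = require('http');

// Send one GET request and resolve with the HTTP status code
// once the response body has been fully drained.
function sendRequest(url) {
  return new Promise((resolve, reject) => {
    http
      .get(url, (res) => {
        res.resume(); // discard the body so the socket is released
        res.on('end', () => resolve(res.statusCode));
      })
      .on('error', reject);
  });
}

// Send `count` requests one after another and resolve with
// the number of responses that came back with status 200.
async function sendRequests(url, count) {
  let ok = 0;
  for (let i = 0; i < count; i++) {
    const status = await sendRequest(url);
    if (status === 200) ok++;
  }
  return ok;
}
```

Calling `sendRequests('http://localhost:3000/', 1000)` between the two snapshots would produce the load (the port and path here are assumptions, adjust them to your setup).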

I think this is caused by our architecture. We’re using the following stack:

- Node 10.22.0

- Express 4.17.1

- MongoDB 4.0.20 (hosted by MongoDB Atlas)

- Mongoose 5.10.3

Depending on the request origin, we need to connect to a different database. To achieve that, we added Express middleware that switches between databases, like so:

- On boot we connect to the database cluster with `mongoose.createConnection(uri, options)`. This sets up a connection pool.

- On every HTTP request we obtain a connection to the right database with `connection.useDb(dbName)`.

- After obtaining the connection we register the Mongoose models with `connection.model(modelName, modelSchema)`.
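To make the steps above concrete, here is a simplified sketch of the middleware. It is not our actual code: `createDbSwitcher`, `resolveDbName`, `schemas` and `req.models` are hypothetical names, and `rootConnection` is assumed to be the connection returned by `mongoose.createConnection(uri, options)` on boot.

```javascript
// Builds Express-style middleware around an existing Mongoose connection.
// `rootConnection`: assumed to come from mongoose.createConnection(uri, options),
//                   created once on boot (step 1).
// `resolveDbName`:  hypothetical function mapping a request to a database name.
// `schemas`:        hypothetical object mapping model names to Mongoose schemas.
function createDbSwitcher(rootConnection, resolveDbName, schemas) {
  return function dbSwitcher(req, res, next) {
    // Step 2: obtain a connection object for this request's database.
    const db = rootConnection.useDb(resolveDbName(req));

    // Step 3: register the models on that connection and expose them
    // to later handlers via the request object.
    req.models = {};
    for (const modelName of Object.keys(schemas)) {
      req.models[modelName] = db.model(modelName, schemas[modelName]);
    }
    next();
  };
}
```

It would be mounted with something like `app.use(createDbSwitcher(connection, (req) => dbNameForOrigin(req), { User: userSchema }))`, where `dbNameForOrigin` and `userSchema` are placeholders. Note that this sketch calls `useDb` once per request, as in the setup described above.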

Do you have any ideas on how we can fix the memory leak, while still being able to switch between databases? Thanks in advance!