Hello there!

I have a string field that is used for autocomplete, and I'm wondering how to implement autocomplete for values that contain special characters or punctuation, e.g.

> 1’ 1/4" - 8"

Should I replace or somehow escape these characters (', /, ") when making an autocomplete query? Where can I find information on how the analyzer parses those characters?

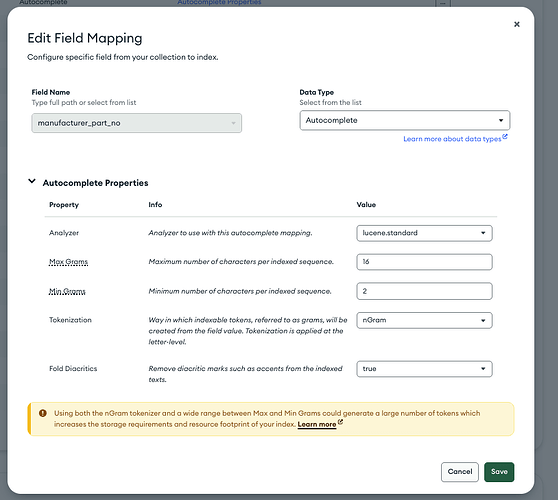

My search index definition:

    {
      "analyzer": "lucene.keyword",
      "mappings": {
        "dynamic": true,
        "fields": {
          "name": [
            {
              "analyzer": "lucene.standard",
              "multi": {
                "keyword": {
                  "analyzer": "lucene.keyword",
                  "type": "string"
                }
              },
              "type": "string"
            },
            {
              "minGrams": 3,
              "tokenization": "edgeGram",
              "type": "autocomplete"
            }
          ]
        }
      }
    }
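
For reference, here is a minimal sketch of what an autocomplete query against the `name` field might look like when built from Python. The index name `default`, the `limit` value, and the helper name `build_autocomplete_pipeline` are assumptions for illustration and are not taken from the index above. The sketch passes the raw input to the `autocomplete` operator without any manual escaping; the backslashes in the Python literal exist only for the source code, not for the search engine. Whether the punctuation then survives tokenization is a separate matter that depends on the analyzer used by the autocomplete mapping.

```python
import json


def build_autocomplete_pipeline(user_input, index_name="default", limit=10):
    """Return an aggregation pipeline using the $search autocomplete operator.

    index_name and limit are illustrative assumptions, not from the post.
    """
    return [
        {
            "$search": {
                "index": index_name,
                "autocomplete": {
                    "query": user_input,  # raw text, punctuation left untouched
                    "path": "name",
                },
            }
        },
        {"$limit": limit},
        {"$project": {"_id": 0, "name": 1}},
    ]


# The sample value from the question, including the ’, / and " characters.
sample = "1’ 1/4\" - 8\""
pipeline = build_autocomplete_pipeline(sample)

# json is used here only to show that serialization escapes the quotes for
# transport and that the value itself round-trips unchanged.
encoded = json.dumps(pipeline, ensure_ascii=False)
assert json.loads(encoded)[0]["$search"]["autocomplete"]["query"] == sample
print(encoded)
```

The resulting list could be handed to a driver call such as `collection.aggregate(pipeline)`; that step is left out so the sketch runs without a database connection.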