Generative AI (GenAI) has been evolving at a rapid pace. After OpenAI’s ChatGPT, powered by GPT-3.5, reached 100 million monthly active users in just two months, other major large language models (LLMs) followed in its footsteps. Cohere’s LLM supports more than 100 languages and is now available on its AI platform, Google’s Med-PaLM was designed to provide high-quality answers to medical questions, OpenAI introduced GPT-4 (which OpenAI reports is 40% more likely to produce factual responses than GPT-3.5), Microsoft integrated GPT-4 within its Office 365 suite, and Amazon introduced Bedrock, a fully managed service that makes foundation models available via API. These are just a few advancements in the generative AI market, and many enterprises and startups are adopting AI tools to solve their specific use cases. The developer community and open-source models are also growing as companies adapt to this paradigm shift in the market.

Building intelligent GenAI applications requires flexibility with data. One of the core requirements is data governance, which is the focus of this blog. Data governance is a broad term encompassing everything you do to ensure data is secure, private, accurate, available, and usable. It includes the processes, policies, measures, technology, tools, and controls around the data lifecycle. When organizations build applications and transition to a production environment, they often deal with personally identifiable information (PII) or commercially sensitive data, such as data related to intellectual property, and want to make sure all the controls are in place.

When organizations are looking to build GenAI-powered apps, there are a few capabilities that are required to deliver intelligent and modern app experiences:

Handle data for both operational and analytical workloads

A data platform that is highly scalable and performant

An expressive query API that can work with any kind of data type

Tight integrations with established and open-source LLMs

Native vector search over embeddings, which enables semantic search and retrieval-augmented generation (RAG)

To learn more about the MongoDB modern database and how to embed generative AI in applications with MongoDB, you can refer to this paper. This blog goes into detail on the security controls of MongoDB Atlas that modern AI applications need.

Check out our AI Learning Hub to learn more about building AI-powered apps with MongoDB.

What are some of the potential security risks while building GenAI applications?

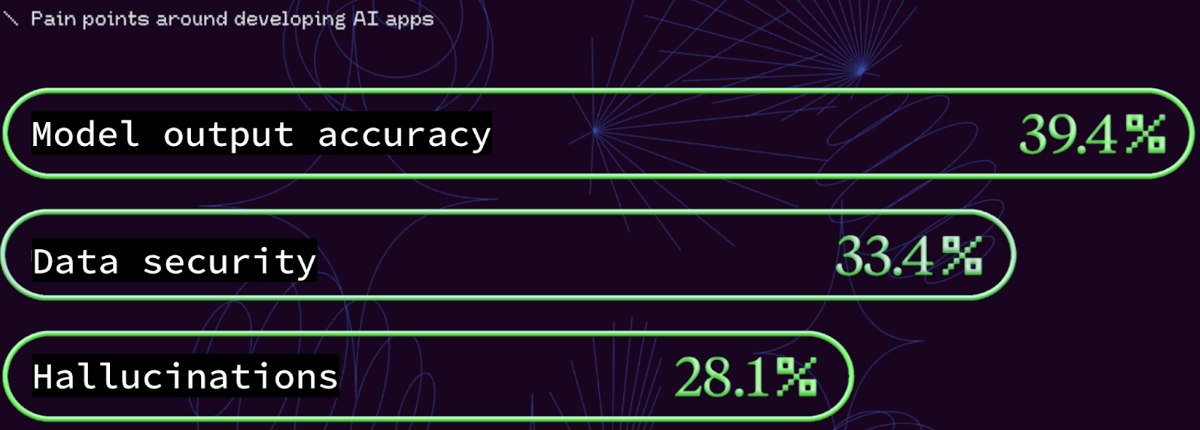

According to the State of AI, 2023 report by Retool, data security and data accuracy are the top two pain points when developing AI applications. In the survey, a third of respondents cited data security as a primary pain point, and that share increases almost linearly with company size (refer to the MongoDB blog for more details).

While organizations leverage AI technology to improve their businesses, they should be wary of the potential risks. As companies experiment with various models and AI tools, the unintended consequences of generative AI become more likely to expose them to the risks above. Even organizations that follow best practices and take a deliberate, structured approach to developing production-ready generative AI applications need strict security controls in place to address the key security considerations that AI applications pose.

Here are some considerations for securing AI applications and systems:

Data security and privacy: Generative AI foundation models rely on large amounts of data to both train against and generate new content. If the training data or the data available to the retrieval-augmented generation (RAG) process includes personal or confidential data, that data may turn up in outputs in unpredictable ways. It is therefore very important to have strong governance and controls in place so that confidential data does not wind up in outputs.

Intellectual property infringement: Organizations need to avoid the unauthorized use, duplication, or sale of works legally regarded as protected intellectual property. They also have to make sure AI models are trained so that their output does not resemble existing works closely enough to infringe the copyright of the originals. Since this is still a new area for AI systems, the laws are evolving.

Regulatory compliance: AI applications have to comply with industry standards and regulations such as HIPAA in healthcare, PCI DSS in finance, GDPR for the protection of personal data in the EU, CCPA, and more.

Explainability: AI systems and algorithms are sometimes perceived as opaque, making non-deterministic decisions. Explainability is the concept that a machine learning model and its output can be explained in a way that makes sense to a human being at an acceptable level and provides repeatable outputs given the same inputs. This is crucial for building trust and accountability in AI applications, especially in domains like healthcare, finance, and security.

AI Hallucinations: AI models may generate inaccurate information, also known as hallucinations. These are often caused by limitations in training data and algorithms. Hallucinations can result in regulatory violations in industries like finance, healthcare, and insurance, and, in the case of individuals, could be reputationally damaging or even defamatory.

These are just some of the considerations when using AI tools and systems. There are additional concerns when it comes to physical security, organizational measures, technical controls for the workforce — both internal and partners — and monitoring and auditing of the systems. By addressing each of these critical issues, organizations can ensure the AI applications they roll out to production are compliant and secure.

Let us look at how MongoDB’s modern database can help with some of these considerations around security controls and measures.

How does MongoDB address the security risks and data governance around GenAI?

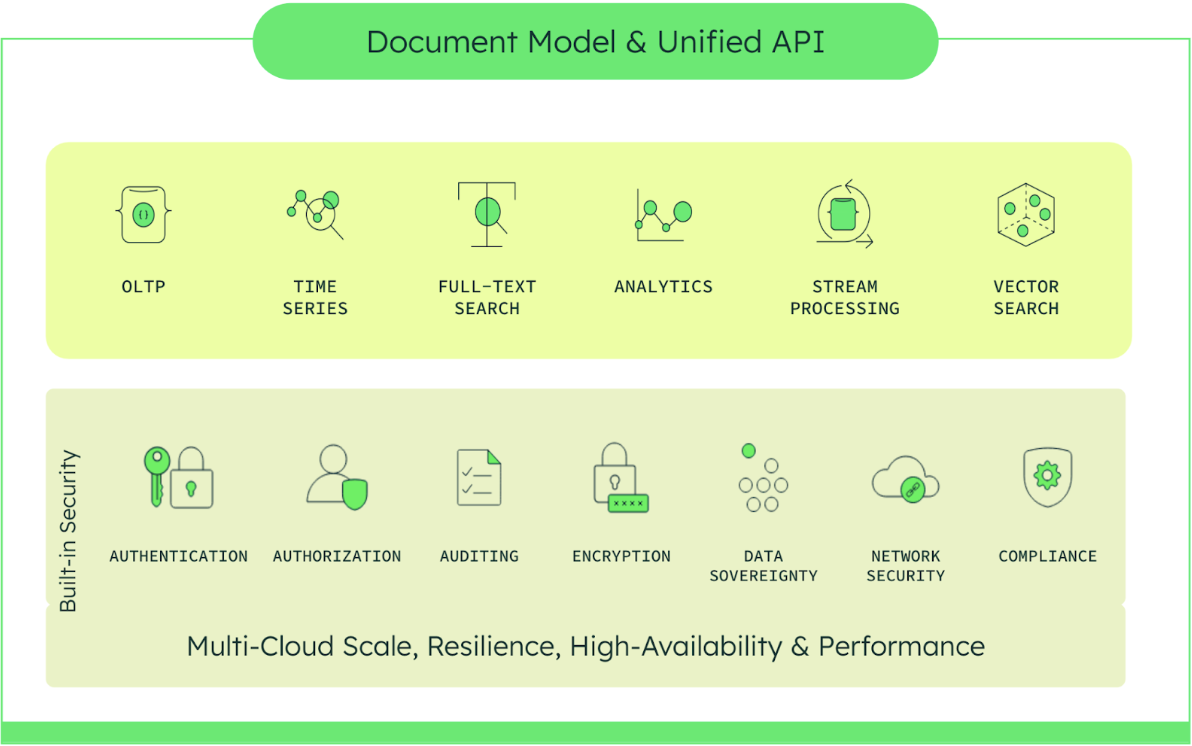

MongoDB's modern database, built on MongoDB Atlas, unifies operational, analytical, and generative AI data services to streamline building intelligent applications. At the core of MongoDB Atlas is its flexible document data model and developer-native query API. Together, they enable developers to dramatically accelerate the speed of innovation, outpace competitors, and capitalize on new market opportunities presented by GenAI.

Developers and data science teams around the world are innovating with AI-powered applications on top of MongoDB. They span multiple use cases in various industry sectors and rely on the security controls MongoDB Atlas provides. Here is the library of sample case studies, white papers, and other resources about how MongoDB is helping customers build AI-powered applications.

MongoDB Atlas offers built-in security controls for all organizational data. The data can be application data as well as vector embeddings and their associated metadata — giving holistic protection of all the data you are using for GenAI-powered applications. Atlas enables enterprise-grade features to integrate with your existing security protocols and compliance standards. In addition, Atlas simplifies deploying and managing your databases while offering the versatility for developers to build resilient applications. MongoDB allows easy integration for security administrators with external systems, while developers can focus on their business requirements. Along with key security features being enabled by default, MongoDB Atlas is designed with security controls that meet enterprise security requirements. Here's how these controls help organizations build their AI applications on MongoDB’s platform and meet the considerations we discussed above:

Data security

MongoDB has access and authentication controls enabled by default. Customers can authenticate to the platform using mechanisms including SCRAM, x.509 certificates, LDAP, passwordless authentication with AWS IAM, and OpenID Connect. MongoDB also provides role-based access control (RBAC) to determine a user's access privileges to various resources within the platform. Data scientists and developers building AI applications can leverage any of these access controls to fine-tune user access and privileges while training or prompting their AI models. Organizations can implement access control mechanisms to restrict data access to authorized personnel only.
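As a rough illustration of the RBAC idea, the sketch below builds a role document in the shape of MongoDB's `createRole` database command, granting only the `find` action on a single collection, and then checks it locally. The role name `ragReader`, the database `support`, and the collection `kb_chunks` are hypothetical, and nothing is sent to a server. On Atlas, custom roles are normally defined through the Atlas UI or Admin API rather than by running this command directly, so treat this only as a picture of what a least-privilege role looks like.

```python
from __future__ import annotations

from typing import Any


def build_read_only_role(role_name: str, db: str, collection: str) -> dict[str, Any]:
    """Build a createRole-style document that grants only `find` on one collection."""
    return {
        "createRole": role_name,
        "privileges": [
            {
                "resource": {"db": db, "collection": collection},
                "actions": ["find"],
            }
        ],
        "roles": [],  # inherit nothing from other roles
    }


def allows(role: dict[str, Any], db: str, collection: str, action: str) -> bool:
    """Local sanity check: does the role document list `action` for this namespace?"""
    return any(
        p["resource"] == {"db": db, "collection": collection} and action in p["actions"]
        for p in role["privileges"]
    )


# Hypothetical names: a role for a RAG retriever that may only read knowledge-base chunks.
role = build_read_only_role("ragReader", "support", "kb_chunks")
print(allows(role, "support", "kb_chunks", "find"))
print(allows(role, "support", "kb_chunks", "insert"))
```

A retrieval component that runs under a role like this can read the documents it needs for prompting but cannot modify them or read other collections.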

End-to-end encryption of data: MongoDB’s data encryption tools offer robust features to protect your data while in transit (network), at rest (storage), and in use (memory and logs). Customers can use automatic encryption of key data fields like personally identifiable information (PII), protected health information (PHI), or any data deemed sensitive, ensuring data is encrypted throughout its lifecycle. Going beyond encryption at rest and in transit, MongoDB has released Queryable Encryption to encrypt data in use. Queryable Encryption enables an application to encrypt sensitive data on the client side, store the encrypted data in the MongoDB database, and run server-side queries on the encrypted data without having to decrypt it. Queryable Encryption is an excellent anonymization technique that makes sensitive data opaque. It can be leveraged when company-specific data containing confidential information is retrieved from the MongoDB database for the RAG process and needs to be anonymized, or when you are storing sensitive data in the database.
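To make the field-level idea more concrete, here is a minimal sketch of the kind of "encrypted fields" description that Queryable Encryption uses to say which document fields are encrypted and which of those can still be queried by equality. The namespace `support.customers` and the field names are hypothetical, and the per-field data encryption key (`keyId`) is left out because key management depends on your KMS setup. Check the exact schema your driver version expects against the Queryable Encryption documentation before relying on this shape.

```python
from __future__ import annotations

import json
from typing import Any


def encrypted_field(path: str, bson_type: str, queryable: bool = False) -> dict[str, Any]:
    """Describe one encrypted field; `queryable` adds equality-query support."""
    field: dict[str, Any] = {"path": path, "bsonType": bson_type}
    if queryable:
        field["queries"] = {"queryType": "equality"}
    return field


def build_encrypted_fields_map(namespace: str, fields: list[dict[str, Any]]) -> dict[str, Any]:
    """Map a "db.collection" namespace to its encrypted field descriptions."""
    return {namespace: {"fields": fields}}


# Hypothetical schema: the SSN stays searchable by exact match, the notes do not.
fields_map = build_encrypted_fields_map(
    "support.customers",
    [
        encrypted_field("ssn", "string", queryable=True),
        encrypted_field("medicalNotes", "string"),
    ],
)
print(json.dumps(fields_map, indent=2))
```

Only fields that genuinely need server-side lookups should be marked queryable; everything else can stay encrypted without query support.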

Regulatory compliance and data privacy

Many uses of generative AI are subject to existing laws and regulations that govern data privacy, intellectual property, and other related areas. New laws and regulations aimed specifically at AI are in the works around the world.

The MongoDB modern database undergoes independent verification of platform security, privacy, and compliance controls to help customers meet their regulatory and policy objectives, including the unique compliance needs of highly regulated industries and U.S. government agencies. Refer to the MongoDB Atlas Trust Center for our current certifications and assessments.

Regular security audits

Organizations should conduct regular security audits to identify potential vulnerabilities in their data security practices. This can help ensure that any security weaknesses are identified and addressed promptly. Audits help to identify and mitigate any risks and errors in your AI models and data, as well as ensure that you are compliant with regulations and standards. MongoDB offers granular auditing that provides a trail of what data was accessed and how, and is designed to monitor and detect any unauthorized access to data.
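One simple way to put an audit trail to work is to scan it for failed operations. The sketch below assumes audit events exported as one JSON object per line, with an `atype` event type, a `remote.ip` client address, and a numeric `result` where 0 means success, which follows the general layout of MongoDB audit messages. The sample events are made up for illustration, and a real pipeline would read from your exported log files instead.

```python
import json
from collections import Counter

# Hypothetical audit events, one JSON document per line.
SAMPLE_AUDIT_LOG = """\
{"atype": "authenticate", "remote": {"ip": "10.0.0.5"}, "param": {"user": "app", "db": "admin"}, "result": 0}
{"atype": "authenticate", "remote": {"ip": "203.0.113.9"}, "param": {"user": "admin", "db": "admin"}, "result": 18}
{"atype": "authCheck", "remote": {"ip": "203.0.113.9"}, "param": {"command": "find", "ns": "support.kb_chunks"}, "result": 13}
{"atype": "authenticate", "remote": {"ip": "203.0.113.9"}, "param": {"user": "root", "db": "admin"}, "result": 18}
"""


def failed_events_by_ip(log_text: str) -> Counter:
    """Count audit events with a non-zero result code, grouped by client IP."""
    failures: Counter = Counter()
    for line in log_text.splitlines():
        line = line.strip()
        if not line:
            continue  # skip blank lines
        event = json.loads(line)
        if event.get("result", 0) != 0:
            ip = event.get("remote", {}).get("ip", "unknown")
            failures[ip] += 1
    return failures


failures = failed_events_by_ip(SAMPLE_AUDIT_LOG)
for ip, count in failures.most_common():
    print(f"{ip}: {count} failed event(s)")
```

A count like this can feed an alert threshold, so that repeated failures from one address are reviewed rather than buried in the log.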

What are additional best practices and considerations while working with AI models?

While it is essential to work with a trusted data platform, it is also important to prioritize security and data governance as discussed. In addition to data security, compliance, and data privacy as mentioned above, here are additional best practices and considerations.

Data quality

Monitor and assess the quality of input data to avoid biases in foundation models. Make sure that your training data is representative of the domain in which your model will be applied. If your model is expected to generalize to real-world scenarios, your training data or data made available for the RAG process should be monitored.

Secure deployment

Use secure and encrypted channels for deploying foundation models. Implement robust authentication and authorization mechanisms to ensure that only authorized users and systems can access sensitive data and AI models. Enforce mechanisms to anonymize sensitive information to protect user privacy.

Audit trails and monitoring

Maintain detailed audit trails and logs of model training, evaluation, and deployment activities. Implement continuous monitoring of both data inputs and model outputs for unexpected patterns or deviations.
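Monitoring model outputs can start with something as simple as scanning each response for strings that look like sensitive data before it is returned or logged. The sketch below uses two illustrative regular expressions, one for email addresses and one for US Social Security number formats. These patterns are assumptions for demonstration only and are far from exhaustive, so a production system would likely rely on a dedicated PII detection service.

```python
import re

# Illustrative patterns only; real deployments need broader, tested coverage.
PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def scan_and_redact(text: str) -> tuple[str, list[str]]:
    """Return the text with matches masked, plus the labels of patterns that fired."""
    findings: list[str] = []
    redacted = text
    for label, pattern in PATTERNS.items():
        if pattern.search(redacted):
            findings.append(label)
            redacted = pattern.sub(f"[REDACTED {label}]", redacted)
    return redacted, findings


# Hypothetical model output containing data that should not reach the user.
redacted, findings = scan_and_redact("Contact jane.doe@example.com, SSN 123-45-6789.")
print(redacted)
print(findings)
```

Logging the returned labels alongside each request gives the audit trail a record of when sensitive patterns appeared in outputs, without storing the sensitive values themselves.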

MongoDB maintains audit trails and logs of all the data operations and data processing. Customers can use the audit logs for monitoring, troubleshooting, and security purposes, including intrusion detection. We utilize a combination of automated scanning, automated alerting, and human review to monitor the data.

Secure data storage

Implement secure storage practices for both raw and processed data. Use encryption for data at rest and in transit as discussed above.

Encryption at rest is turned on automatically on MongoDB servers. The encryption occurs transparently in the storage layer; that is, all data files are fully encrypted from a filesystem perspective, and as far as the storage layer is concerned, data only exists in an unencrypted state in memory and during transmission (where it is protected separately by encryption in transit, as discussed above).

Conclusion

As generative AI tools grow in popularity, it matters more than ever how an organization understands, protects, and uses its data, which means defining the roles, controls, processes, and policies for interacting with that data. As modern enterprises use generative AI and LLMs to better serve customers and extract insights from their data, strong data governance becomes essential. By understanding the potential risks and carefully evaluating the capabilities of the platform that hosts their data, organizations can confidently harness the power of these tools.

For more details on MongoDB’s trusted platform, refer to these links.