Retool has just published its first-ever State of AI report and it's well worth a read. Modeled on its massively popular State of Internal Tools report, the State of AI survey took the pulse of over 1,500 tech folks spanning software engineering, leadership, product managers, designers, and more drawn from a variety of industries. The survey’s purpose is to understand how these tech folk use and build with artificial intelligence (AI).

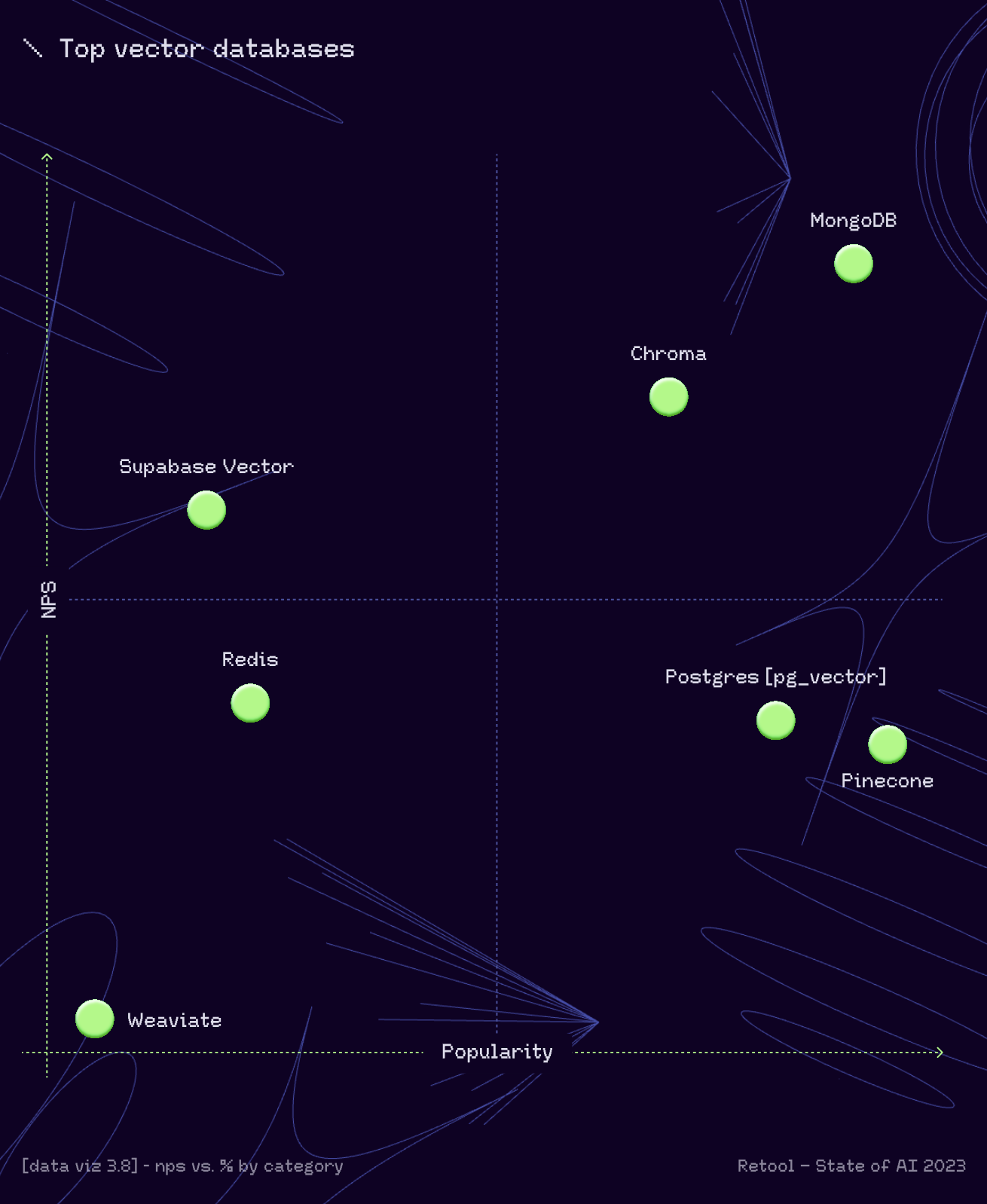

As part of the survey, Retool dug into which tools were popular, including the vector databases used most frequently with AI. The survey found that MongoDB Atlas Vector Search commanded the highest Net Promoter Score (NPS) and was the second most widely used vector database, all within just five months of its release. This places it ahead of competing solutions that have been around for years.

In this blog post, we’ll examine the phenomenal rise of vector databases and how developers are using solutions like Atlas Vector Search to build AI-powered applications. We’ll also cover other key highlights from the Retool report.

Check out our AI learning hub to learn more about building AI-powered apps with MongoDB.

Vector database adoption: Off the charts (well almost...)

From mathematical curiosity to the superpower behind generative AI and LLMs, vector embeddings and the databases that manage them have come a long way in a very short time.

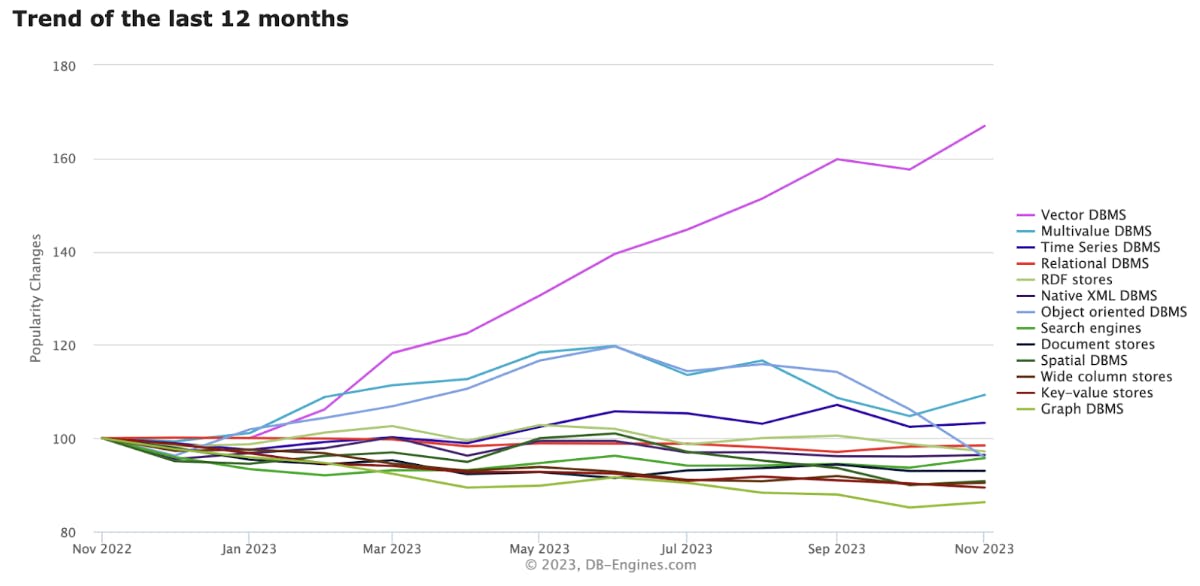

Check out DB-Engines trends in database models over the past 12 months and you'll see that vector databases are head and shoulders above all others in popularity change. Just look at the pink line’s "up and to the right" trajectory in the chart below.

But why have vector databases become so popular?

They are a key component in a new architectural pattern called retrieval-augmented generation (RAG), which takes the reasoning capabilities of pre-trained, general-purpose LLMs and feeds them real-time, company-specific data. The results are AI-powered apps that uniquely serve the business, whether that’s creating new products, reimagining customer experiences, or driving internal productivity and efficiency to unprecedented heights.

Vector embeddings are one of the fundamental components required to unlock the power of RAG. Vector embedding models encode enterprise data, whether it is text, code, video, images, audio streams, or tables, as vectors. Those vectors are then stored, indexed, and queried in a vector database or vector search engine, providing the relevant input data as context to the chosen LLM. The result is AI apps grounded in enterprise data and knowledge that is relevant to the business, accurate, trustworthy, and up-to-date.
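To make that flow concrete, here is a minimal, self-contained Python sketch of the retrieval step in RAG. It is illustrative only: the `embed` function is a toy bag-of-words stand-in for a real embedding model, the in-memory list stands in for a vector database, and every function name and sample document is hypothetical.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query: str, documents: list, k: int = 2) -> list:
    """Return the k documents most similar to the query (brute-force scan)."""
    query_vector = embed(query)
    ranked = sorted(
        documents,
        key=lambda doc: cosine_similarity(query_vector, embed(doc)),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query: str, context: list) -> str:
    """Assemble the retrieved context and the question into an LLM prompt."""
    context_block = "\n".join(f"- {doc}" for doc in context)
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context_block}\n"
        f"Question: {query}"
    )


if __name__ == "__main__":
    # Hypothetical company-specific documents.
    docs = [
        "refund requests are processed within five business days",
        "the office is closed on public holidays",
        "shipping is free for orders over fifty dollars",
    ]
    question = "how long does a refund take"
    print(build_prompt(question, retrieve(question, docs, k=1)))
```

In a real application, `embed` would call an embedding model, the brute-force scan would be replaced by an indexed vector search, and the assembled prompt would be sent to the chosen LLM.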

As the Retool survey shows, the vector database landscape is still largely greenfield. Fewer than 20% of respondents are using vector databases today, but with the growing trend towards customizing models and AI infrastructure, adoption is guaranteed to grow.

Why are developers adopting Atlas Vector Search?

Retool's State of AI survey features some great vector databases that have blazed a trail over the past couple of years, especially in applications requiring context-aware semantic search. Think product catalogs or content discovery.

However, the challenge developers face in using those vector databases is that they have to integrate them alongside other databases in their application’s tech stack.

Every additional database layer in the application tech stack adds yet another source of complexity, latency, and operational overhead. This means developers have another database to procure, learn, integrate (for development, testing, and production), secure and certify, scale, monitor, and back up. And this is all while keeping data in sync across these multiple systems.

MongoDB takes a different approach that avoids these challenges entirely:

Developers store and search native vector embeddings in the same system they use as their operational database.

Using MongoDB’s distributed architecture, they can isolate these different workloads while keeping the data fully synchronized.

Search Nodes provide dedicated compute and workload isolation that is vital for memory-intensive vector search workloads, thereby enabling improved performance and higher availability.

With MongoDB’s flexible and dynamic document schema, developers can model and evolve relationships between vectors, metadata, and application data in ways other databases cannot.

They can process and filter vector and operational data in any way the application needs with an expressive query API and drivers that support all of the most popular programming languages.

Using the fully managed MongoDB Atlas modern database empowers developers to achieve the scale, security, and performance that their application users expect.

What does this unified approach mean for developers? Faster development cycles and higher-performing apps with lower latency and fresher data, coupled with lower operational overhead and cost. These outcomes are reflected in MongoDB’s best-in-class NPS.
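As a rough illustration of searching vectors and filtering on operational data in a single query, the sketch below builds an aggregation pipeline around Atlas's `$vectorSearch` stage with a metadata pre-filter. The index name `vector_index`, the fields `embedding`, `category`, and `title`, and the helper function itself are assumptions made for this example; consult the Atlas Vector Search documentation for the authoritative stage options.

```python
from typing import Any, Dict, List


def build_vector_search_pipeline(
    query_vector: List[float],
    category: str,
    limit: int = 5,
    num_candidates: int = 100,
) -> List[Dict[str, Any]]:
    """Build an aggregation pipeline combining vector search with a filter.

    All field and index names here are hypothetical placeholders.
    """
    if num_candidates < limit:
        raise ValueError("num_candidates must be greater than or equal to limit")
    return [
        {
            "$vectorSearch": {
                "index": "vector_index",      # assumed index name
                "path": "embedding",          # assumed field holding the vector
                "queryVector": query_vector,
                "numCandidates": num_candidates,
                "limit": limit,
                # Pre-filter on operational metadata stored in the same document.
                "filter": {"category": {"$eq": category}},
            }
        },
        {
            "$project": {
                "_id": 0,
                "title": 1,
                "category": 1,
                # Relevance score produced by the vector search stage.
                "score": {"$meta": "vectorSearchScore"},
            }
        },
    ]


if __name__ == "__main__":
    pipeline = build_vector_search_pipeline([0.12, -0.03, 0.57], "footwear", limit=3)
    # With PyMongo and an Atlas cluster, this pipeline would be passed to
    # collection.aggregate(pipeline); that call is not executed in this sketch.
    print(pipeline)
```

Because the vector and the `category` value live in the same document, there is no second system to keep in sync. For the pre-filter to work, the `category` field would also need to be declared as a filter field in the vector search index definition.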

“Atlas Vector Search is robust, cost-effective, and blazingly fast!”

Check out our Building AI with MongoDB blog series (head to the Getting Started section to see the back issues). Here you'll see Atlas Vector Search used for GenAI-powered applications spanning conversational AI with chatbots and voicebots, co-pilots, threat intelligence and cybersecurity, contract management, question-answering, healthcare compliance and treatment assistants, content discovery and monetization, and more.

“MongoDB was already storing metadata about artifacts in our system. With the introduction of Atlas Vector Search, we now have a comprehensive vector-metadata database that’s been battle-tested over a decade and that solves our dense retrieval needs. No need to deploy a new database we'd have to manage and learn. Our vectors and artifact metadata can be stored right next to each other.”

What can you learn about the state of AI from the Retool report?

Beyond uncovering the most popular vector databases, the survey covers AI from a range of perspectives. It starts by exploring respondents' perceptions of AI. (Unsurprisingly, the C-suite is more bullish than individual contributors.) It then explores investment priorities, AI’s impact on future job prospects, and how it will likely affect developers and the skills they need in the future.

The survey then explores the level of AI adoption and maturity. Over 75% of survey respondents say their companies are making efforts to get started with AI, with around half saying these were still early projects, and mainly geared towards internal applications. The survey goes on to examine what those applications are, and how useful the respondents think they are to the business. It finds that almost everyone’s using AI at work, whether they are allowed to or not, and then identifies the top pain points. It's no surprise that model accuracy, security, and hallucinations top that list.

The survey concludes by exploring the top models in use. Again, it is no surprise that OpenAI’s offerings are leading the way, but the survey also indicates growing intent to use open source models, along with AI infrastructure and tools for customization, in the future.

You can dig into all of the survey details by reading the report.

Getting started with Atlas Vector Search

Eager to take a look at our Vector Search offering? Head over to our Atlas Vector Search product page. There you will find links to tutorials, documentation, and key AI ecosystem integrations so you can dive straight into building your own GenAI-powered apps.

If you want to learn more about the high-level possibilities of Vector Search, download our Embedding Generative AI whitepaper.

Head over to our quick-start guide to get started with Atlas Vector Search today.

Check out our presentation Build Your AI Roadmap for 2024 to learn about different AI use cases and how organizations are making changes to support them!