As the digital landscape evolves, developers are constantly on the lookout for innovative ways to optimize their applications and deliver seamless user experiences. One approach that has gained popularity over the years is serverless architecture. By abstracting away server management and scaling concerns, serverless promises increased development efficiency, reduced operational overhead, and potential cost savings.

However, before diving headfirst into this paradigm shift, it's crucial to understand the tradeoffs and costs associated with serverless architecture to know if it’s the right fit for your use case and budget requirements.

What is serverless architecture?

Let's first briefly review what serverless architecture entails. In traditional setups, developers manage servers, infrastructure provisioning, and scaling. By contrast, serverless architecture allows developers to focus solely on the business logic for their applications without worrying about the underlying infrastructure. Instead, the service providers handle the server provisioning and scaling dynamically based on the application's demand.

There are a variety of technologies and services that now fit the serverless model, including function-as-a-service (FaaS), API gateways, object storage, and even databases.

Understanding the cost model of serverless

When it comes to pricing, serverless solutions follow a usage-based model where you “only pay for what you use”. This means that instead of paying fixed monthly fees to maintain servers, you pay only for the computing resources consumed while your code executes. The primary cost factors vary slightly by service, but most services meter on some form of the following:

Compute resources: The compute needed to execute and service your application workload.

Memory or storage allocation: The amount of memory allocated or overall data size being stored.

Data transfer: The amount of data transferred in and out of the service.
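To make the three metering dimensions concrete, here is a minimal sketch of how a usage-based bill might be estimated for a function-style workload. The `Rates` class, its field names, and the numbers you pass in are all hypothetical placeholders, not any provider's actual pricing; real services differ in units, free tiers, and rounding rules.

```python
from dataclasses import dataclass


@dataclass
class Rates:
    """Hypothetical unit prices; substitute your provider's published rates."""
    per_million_requests: float  # charge per one million invocations
    per_gb_second: float         # charge per GB of memory held for one second
    per_gb_transferred: float    # charge per GB of data moved in or out


def monthly_cost(rates: Rates, requests: int, avg_duration_s: float,
                 memory_gb: float, transfer_gb: float) -> float:
    """Estimate a monthly bill from the three metered dimensions."""
    # Per-invocation charge.
    request_cost = requests / 1_000_000 * rates.per_million_requests
    # Compute charge: memory allocation multiplied by total execution time.
    compute_cost = requests * avg_duration_s * memory_gb * rates.per_gb_second
    # Data transfer charge.
    transfer_cost = transfer_gb * rates.per_gb_transferred
    return request_cost + compute_cost + transfer_cost
```

With zero requests and zero transfer the estimate is zero, which is the "only pay for what you use" property in practice.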

Cost comparison: Serverless vs. provisioned infrastructure

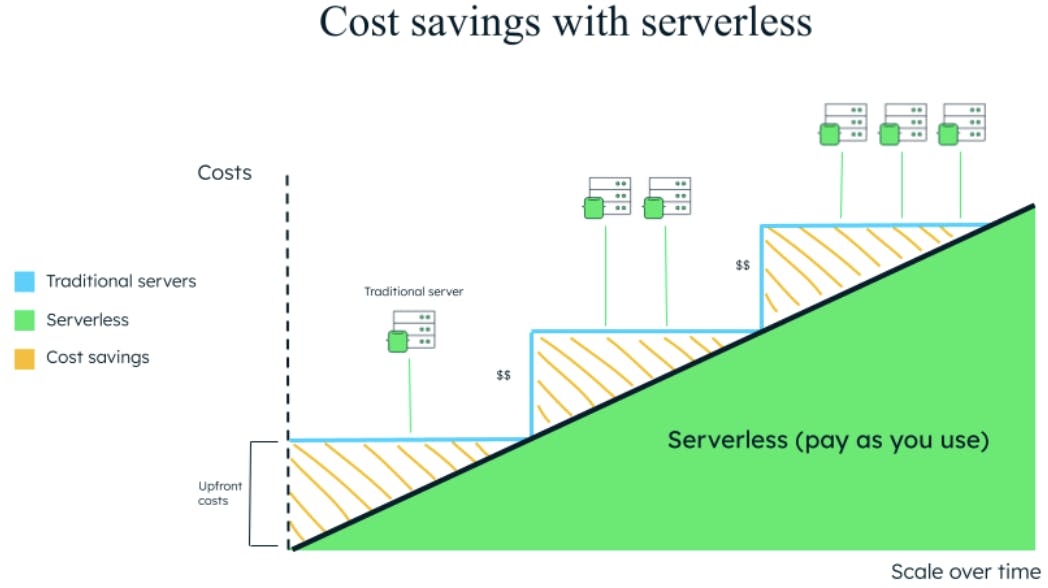

To determine whether serverless will save you money, you must evaluate your application's specific requirements and usage patterns. Serverless architecture can be cost-effective in certain scenarios, but it might not be the optimal choice for every use case.

Generally, with traditional provisioned infrastructure, you incur upfront costs before your application receives any traffic, which means you will likely pay for much more capacity than you need. The same cycle repeats as your application grows and requires more resources: you scale up to a server that is larger than you actually need. Serverless, on the other hand, removes the upfront cost and the risk of over-provisioning, since it scales as needed and you only pay for what you use.

However, not all applications scale linearly, so for both new and more established applications where you may be considering using serverless, it’s important to think about your usage patterns and requirements before going down this path.

Here's a breakdown of cost considerations depending on your application's requirements and traffic patterns:

Low and Variable Workloads: If your application experiences irregular traffic patterns or low user demand, serverless can be highly cost-effective. You won't have to pay for idle server time, as the service provider automatically scales down to zero when there's no traffic.

High Burst Traffic: Serverless excels in handling sudden spikes in traffic. Provisioned infrastructure may require overprovisioning to handle peak loads, incurring unnecessary costs during normal usage.

Predictable Workloads: In cases of steady, predictable workloads, provisioned infrastructure with reserved instance capacity might be more cost-effective than serverless.

Short-Lived Tasks: For tasks that execute quickly and don't require significant resources, serverless can be more cost-efficient. Provisioned servers might incur higher costs due to minimum capacity or billing requirements.

Long-Running Tasks: If your application frequently executes tasks that run for extended periods, serverless may end up being more expensive in the long run. In these scenarios, provisioned infrastructure may be the more cost-effective option.
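The scenarios above come down to a break-even question: at what volume does a flat provisioned bill become cheaper than paying per request? The sketch below illustrates that arithmetic under simplifying assumptions: a single fixed monthly price for provisioned capacity, a constant serverless cost per request, and no free tier. The function names and any figures you pass in are hypothetical.

```python
def break_even_requests(provisioned_monthly_cost: float,
                        cost_per_request: float) -> float:
    """Monthly request volume at which both options cost the same."""
    if cost_per_request <= 0:
        raise ValueError("cost_per_request must be positive")
    return provisioned_monthly_cost / cost_per_request


def cheaper_option(requests: int, provisioned_monthly_cost: float,
                   cost_per_request: float) -> str:
    """Return which model costs less at the given monthly volume."""
    serverless_cost = requests * cost_per_request
    # Ties go to provisioned, since its cost does not grow with volume.
    if serverless_cost < provisioned_monthly_cost:
        return "serverless"
    return "provisioned"
```

Below the break-even volume, the low and variable workloads described above favor serverless; above it, steady high-volume workloads favor provisioned capacity. Real comparisons should also account for operational effort, which this sketch ignores.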

Optimizing costs in serverless architecture

Because serverless solutions are charged based on usage, ensuring you have proper optimizations in place is not only important for performance but also to keep costs as low as possible. It’s important to make sure you are considering best practices for implementation so the service runs smoothly and can scale as efficiently as possible.

This can mean different things depending on the type of serverless service you are using. If you are using a function-as-a-service platform like AWS Lambda, that may mean allocating the right amount of memory for your function or avoiding unnecessary invocations. Regardless of the service, you should familiarize yourself with its best practices before jumping in.
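As one illustration of memory right-sizing, the sketch below compares the per-invocation compute cost of several memory settings, given durations you have measured yourself. It assumes billing proportional to memory multiplied by execution time; the function name, the rate, and the sample measurements in the usage note are hypothetical, and your provider's billing granularity may differ.

```python
def cheapest_memory_setting(measured: dict[int, float],
                            per_gb_second: float) -> tuple[int, dict[int, float]]:
    """Pick the memory size (in MB) with the lowest cost per invocation.

    `measured` maps a memory setting in MB to the average duration in
    seconds observed at that setting.
    """
    costs = {
        memory_mb: (memory_mb / 1024) * duration_s * per_gb_second
        for memory_mb, duration_s in measured.items()
    }
    # The smallest allocation is not always cheapest: if extra memory
    # shortens the run enough, the total GB-seconds can go down.
    best = min(costs, key=costs.get)
    return best, costs
```

For example, with hypothetical measurements of `{128: 2.0, 512: 0.4, 1024: 0.3}` seconds, the 512 MB setting uses the fewest GB-seconds per invocation, even though 128 MB is the smallest allocation.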

Choosing the right solution for your needs

Serverless architecture offers developers a powerful way to streamline development and focus on building applications without worrying about infrastructure management, providing benefits far beyond cost savings alone. For certain use cases with varying workloads and short-lived tasks, serverless can indeed save you money. However, it's crucial to assess your application's specific requirements and usage patterns to determine if serverless is the right fit for your needs.

By understanding the cost model, comparing it with provisioned infrastructure, and implementing the proper cost optimization strategies, you can make an informed decision that aligns with your development goals and budget.

Get started with MongoDB Atlas

MongoDB Atlas gives developers the flexibility to address their workload requirements, regardless of their app's traffic patterns or budget constraints.