Thermo Fisher & MongoDB: Moving Apps to the Cloud with MongoDB & AWS

December 8, 2016 | Updated: April 23, 2026

Biotechnology giant uses MongoDB Atlas and an assortment of AWS technologies and services to reduce experiment times from days to minutes.

Thermo Fisher (NYSE: TMO) is moving its applications to the public cloud as part of a larger Thermo Fisher Cloud initiative with the help of offerings such as MongoDB Atlas and Amazon Web Services. Last week, our CTO & Cofounder Eliot Horowitz presented at AWS re:Invent with Thermo Fisher Senior Software Architect Joseph Fluckiger on some of the transformative benefits they’re seeing internally and across customers. This recap will cover Joseph’s portion of the presentation.

Joseph started by telling the audience that Thermo Fisher is maybe the largest company they’d never heard of. Thermo Fisher employs over 51,000 people across 50 countries, with over $17 billion in revenues in 2015. Formed a decade ago through the merger of Thermo Electron & Fisher Scientific, it is one of the leading companies in the world in the genetic testing and precision laboratory equipment markets.

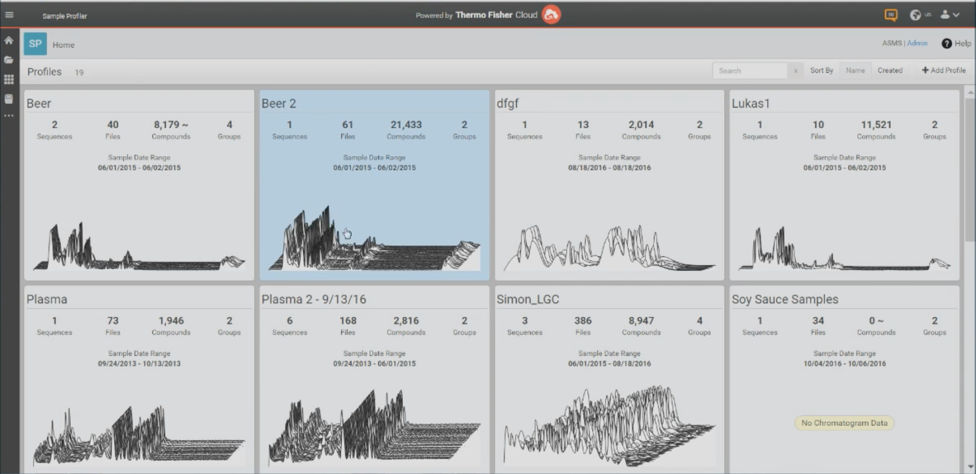

The Thermo Fisher Cloud is a new offering built on Amazon Web Services consisting of 35 applications supported by over 150 Thermo Fisher developers. It allows customers to streamline their experimentation, processing, and collaboration workflows, fundamentally changing how researchers and scientists work. It serves 10,000 unique customers and stores over 1.3 million experiments, making it one of the largest cloud platforms for the scientific community. For internal teams, Thermo Fisher Cloud has also streamlined development workflows, allowing developers to share more code and create a consistent user experience by taking advantage of a microservices architecture built on AWS.

One of the precision laboratory instruments the company produces is a mass spectrometer, which works by ionizing a sample (for example, by bombarding it with electrons), accelerating the resulting ions, and subjecting them to an electric or magnetic field. The ions are then sorted by their mass-to-charge ratio and matched to known values to help customers determine the exact composition of the sample in question. Joseph’s team develops the software powering these machines.
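To make the matching step concrete, here is a minimal Python sketch of how an observed peak might be compared against known values. It is not Thermo Fisher's software: the reference table, the function names, and the tolerance are assumptions chosen for illustration, and the masses are approximate.

```python
# Illustrative reference table (assumption): approximate monoisotopic
# masses in daltons. A real instrument library is far larger.
KNOWN_MASSES = {
    "water": 18.0106,
    "glucose": 180.0634,
    "caffeine": 194.0804,
}


def mass_to_charge(mass_da: float, charge: int) -> float:
    """Return the mass-to-charge ratio (m/z) of an ion."""
    if charge == 0:
        raise ValueError("an uncharged particle has no mass-to-charge ratio")
    return mass_da / abs(charge)


def match_peak(observed_mz: float, charge: int = 1, tolerance: float = 0.01):
    """Return the closest known compound within the tolerance, or None."""
    best_name, best_error = None, tolerance
    for name, mass in KNOWN_MASSES.items():
        error = abs(mass_to_charge(mass, charge) - observed_mz)
        if error <= best_error:
            best_name, best_error = name, error
    return best_name
```

With this table, `match_peak(194.081)` resolves to caffeine, while a peak far from every reference value returns `None`. The sketch ignores ionization adducts and isotope patterns, which real matching has to account for.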

Thermo Fisher mass spectrometers are used to:

- Detect pesticides & pollutants — anything that’s bad for you

- Identify organic molecules on extraplanetary missions

- Process samples from athletes to look for performance-enhancing substances

- Drive product authenticity tests

- And more

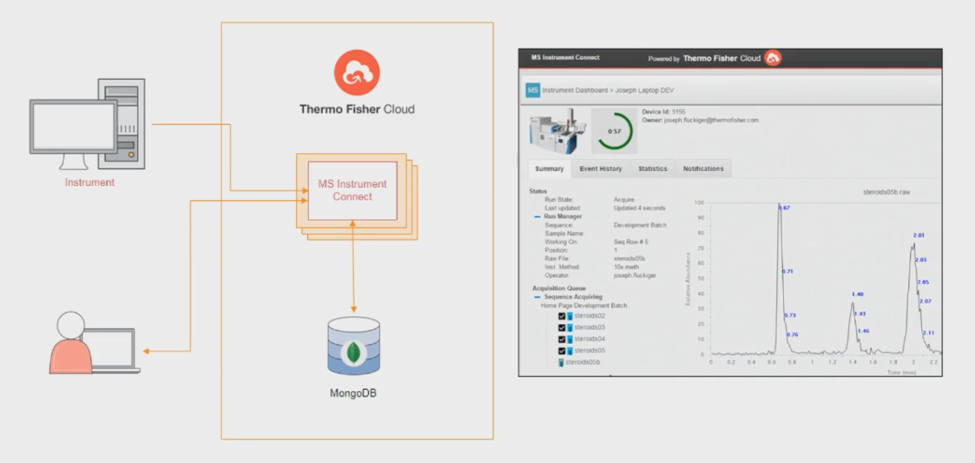

During the presentation, Joseph showed off one application in the Thermo Fisher Cloud called MS Instrument Connect, which allows customers to see the status of their spectrometry instruments with live experiment results from any mobile device or browser. No longer does a scientist have to sit at the instrument to monitor an ongoing experiment. MS Instrument Connect also allows Thermo Fisher customers to easily query across instruments and get utilization statistics. Supporting MS Instrument Connect and marshalling data back and forth is a MongoDB cluster deployed in MongoDB Atlas, our hosted database as a service.
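A query for utilization statistics across instruments could be expressed as a MongoDB aggregation pipeline. The sketch below builds one in Python. The field names (`instrument_id`, `started_at`, `duration_seconds`) and the idea of one document per experiment run are assumptions for illustration, not the actual MS Instrument Connect schema.

```python
from datetime import datetime


def utilization_pipeline(start: datetime, end: datetime) -> list:
    """Build an aggregation pipeline that reports, per instrument, how many
    runs started in [start, end) and what fraction of the window they used."""
    window_seconds = (end - start).total_seconds()
    if window_seconds <= 0:
        raise ValueError("end must be later than start")
    return [
        # Keep only runs that started inside the reporting window.
        {"$match": {"started_at": {"$gte": start, "$lt": end}}},
        # Collapse the runs into one summary document per instrument.
        {"$group": {
            "_id": "$instrument_id",
            "run_count": {"$sum": 1},
            "busy_seconds": {"$sum": "$duration_seconds"},
        }},
        # Express busy time as a fraction of the window.
        {"$project": {
            "run_count": 1,
            "busy_seconds": 1,
            "utilization": {"$divide": ["$busy_seconds", window_seconds]},
        }},
        # Busiest instruments first.
        {"$sort": {"utilization": -1}},
    ]
```

Given a PyMongo collection, here called `runs` as a hypothetical name, the pipeline would be executed with `runs.aggregate(utilization_pipeline(start, end))`.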

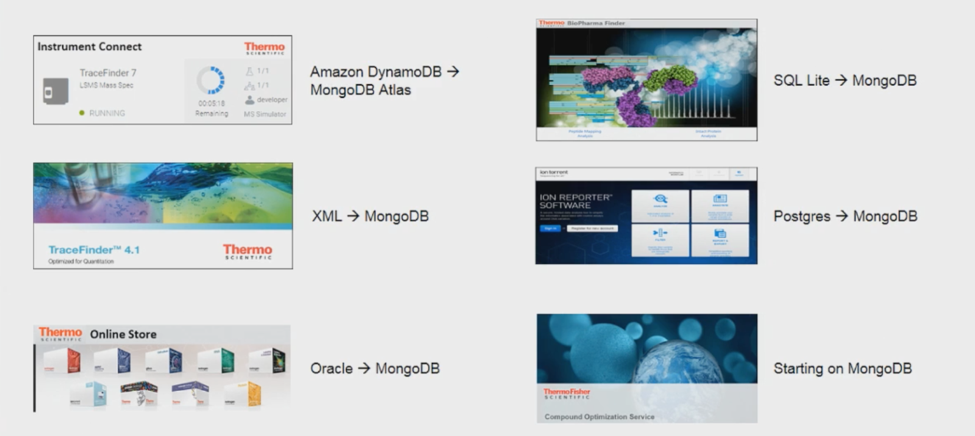

Joseph shared that MongoDB is being used across multiple projects in Thermo Fisher and the Thermo Fisher Cloud, including Instrument Connect, which was originally deployed on DynamoDB. Other notable applications include the Thermo Fisher Online Store (which was migrated from Oracle), Ion Reporter (which was migrated from PostgreSQL), and BioPharma Finder (which is being migrated from SQLite).

To support scientific experiments, Thermo Fisher needed a database that could easily handle a wide variety of fast-changing data and allow its customers to slice and dice their data in many different ways. Experiment data is also very large; each experiment produces millions of “rows” of data. When explaining why MongoDB was chosen for such a wide variety of use cases across the organization, Joseph called the database a “swiss army knife” and cited the following characteristics:

- High performance

- High flexibility

- Ability to improve developer productivity

- Ability to be deployed in any environment, cloud or on premises

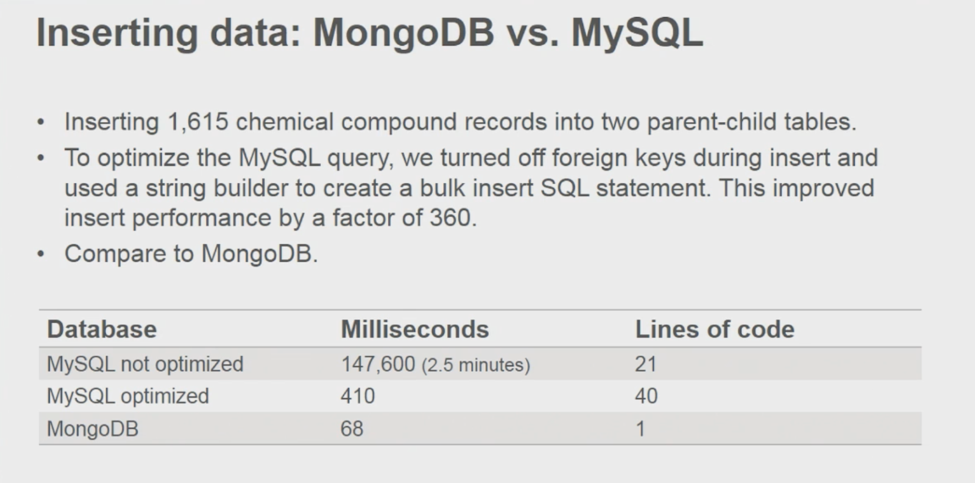

What really got the audience’s attention was a segment where Joseph compared incumbent databases that Thermo Fisher had been using with MongoDB.

MongoDB compared to MySQL (Aurora)

“If I were to reduce my slides down to one, this would be that slide,” Joseph stated. “This is absolutely phenomenal. What we did was we inserted data into MongoDB & Aurora and with only 1 line of code, we were able to beat the performance of MySQL.”

In addition to delivering 6x higher performance with 40x less code, MongoDB also helped reduce the schema complexity of the app.
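The difference in code volume and schema complexity comes largely from how nested data is stored. The sketch below is not the benchmark from the presentation. It is an illustrative comparison that uses Python's built-in `sqlite3` module as a stand-in for a relational database, with a hypothetical experiment shape and hypothetical table names.

```python
import sqlite3

# Hypothetical nested experiment: scans, each holding (m/z, intensity) peaks.
experiment = {
    "name": "run-42",
    "instrument": "ms-01",
    "scans": [
        {"retention_time": 0.5, "peaks": [[100.1, 2500.0], [150.2, 900.0]]},
        {"retention_time": 1.0, "peaks": [[100.1, 2600.0]]},
    ],
}


def create_schema(conn: sqlite3.Connection) -> None:
    """Relational side: one table per level of nesting."""
    conn.executescript("""
        CREATE TABLE experiments (id INTEGER PRIMARY KEY, name TEXT, instrument TEXT);
        CREATE TABLE scans (id INTEGER PRIMARY KEY,
                            experiment_id INTEGER REFERENCES experiments(id),
                            retention_time REAL);
        CREATE TABLE peaks (id INTEGER PRIMARY KEY,
                            scan_id INTEGER REFERENCES scans(id),
                            mz REAL, intensity REAL);
    """)


def insert_relational(conn: sqlite3.Connection, exp: dict) -> int:
    """Flatten the nested experiment into rows across three tables."""
    cur = conn.cursor()
    cur.execute("INSERT INTO experiments (name, instrument) VALUES (?, ?)",
                (exp["name"], exp["instrument"]))
    exp_id = cur.lastrowid
    for scan in exp["scans"]:
        cur.execute("INSERT INTO scans (experiment_id, retention_time) VALUES (?, ?)",
                    (exp_id, scan["retention_time"]))
        scan_id = cur.lastrowid
        cur.executemany("INSERT INTO peaks (scan_id, mz, intensity) VALUES (?, ?, ?)",
                        [(scan_id, mz, intensity) for mz, intensity in scan["peaks"]])
    conn.commit()
    return exp_id


def insert_document(collection, exp: dict):
    """Document side: the nested structure is stored as-is in one call.
    `collection` is assumed to be a PyMongo collection."""
    return collection.insert_one(exp)
```

The relational path needs a schema and a flattening routine before any data can be written, while the document path passes the same dictionary straight to `insert_one`. Reading the data back shows a similar asymmetry: joins across three tables versus a single document lookup.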

MongoDB compared to SQLite

For the mass spectrometry application used in performance-enhancing drug testing, Thermo Fisher rewrote the data layer from SQLite to MongoDB and reduced their code by a factor of about 3.5.

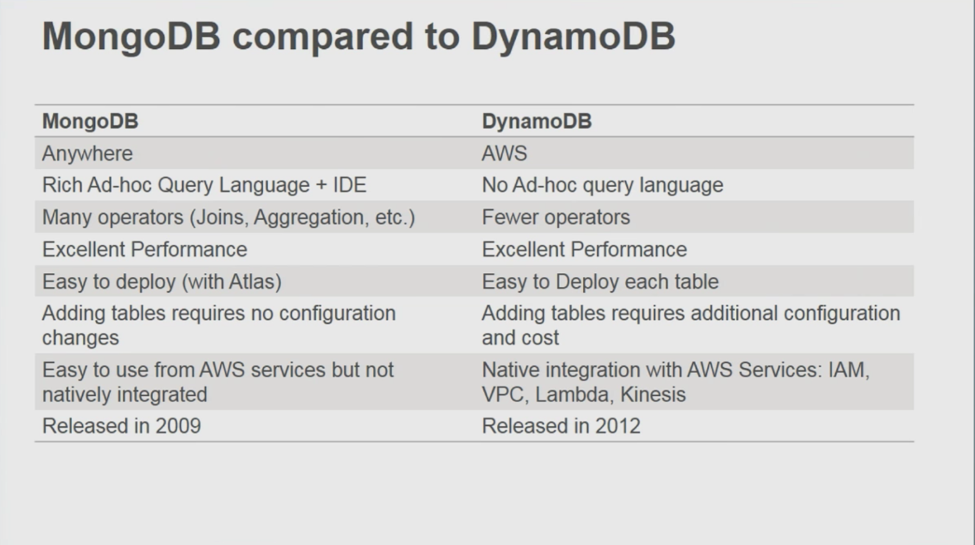

MongoDB compared to DynamoDB

Joseph then compared MongoDB to DynamoDB, stating that while both databases are great and easy to deploy, MongoDB offers a more powerful query language for richer queries to be run and allows for much simpler schema evolution. He also reminded the audience that MongoDB can be run in any environment while DynamoDB can only be run on AWS.

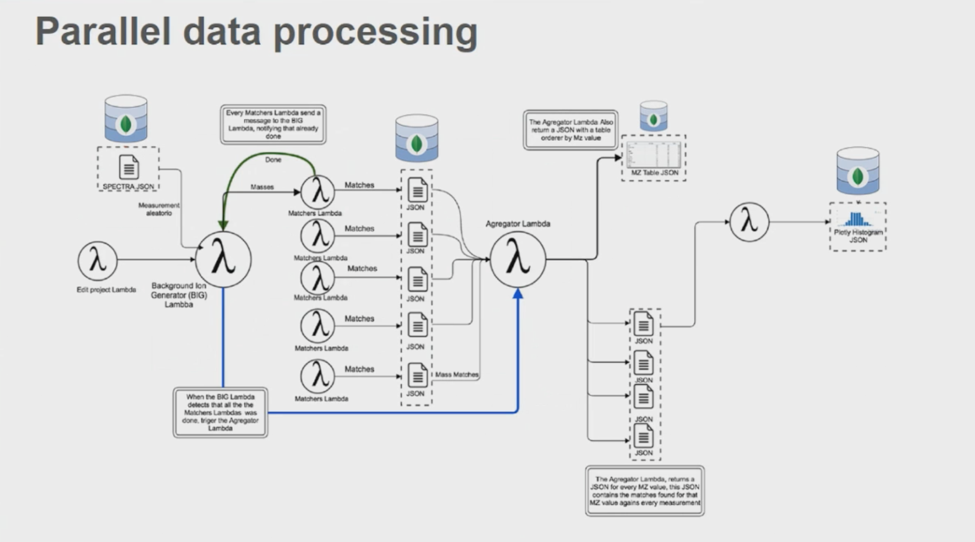

Finally, Joseph presented an architecture diagram showing how MongoDB is being used on AWS alongside technologies such as AWS Lambda, Docker, and Apache Spark to parallelize algorithms and significantly reduce experiment processing times.
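The general shape of that parallelization is a fan-out over chunks of experiment data followed by a merge of the partial results. The sketch below shows the pattern locally with a thread pool standing in for Lambda functions or Spark executors. The per-chunk algorithm and all names are hypothetical, and the source does not describe Thermo Fisher's actual implementation.

```python
from concurrent.futures import ThreadPoolExecutor


def chunked(items: list, size: int):
    """Yield consecutive slices of `items`, each with at most `size` elements."""
    for start in range(0, len(items), size):
        yield items[start:start + size]


def process_chunk(scans: list) -> list:
    """Hypothetical per-chunk algorithm: total intensity of each scan."""
    return [sum(scan) for scan in scans]


def process_experiment(scans: list, chunk_size: int = 2, workers: int = 4) -> list:
    """Fan the chunks out to workers, then merge the results in input order."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # Executor.map returns results in the same order as the inputs.
        partials = pool.map(process_chunk, chunked(scans, chunk_size))
        return [total for chunk in partials for total in chunk]
```

For example, `process_experiment([[1, 2], [3], [4, 5, 6]])` returns one total per scan, in the original order. Threads in CPython will not speed up CPU-bound work, so this only illustrates the structure. The speedup in a real deployment comes from running each chunk on separate compute.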

He concluded his presentation by explaining why Thermo Fisher is pushing applications to MongoDB Atlas, citing its ease of use, the seamless migration process, and the absence of downtime, even when reconfiguring the cluster. The company began testing MongoDB Atlas around its release date in early July and began launching production applications on the service in September. With the time the Thermo Fisher team is saving by using MongoDB Atlas (time that would otherwise have been spent writing and optimizing their data layer), they’re able to invest more in improving their algorithms, their customer experience, and their processing infrastructure.

“Anytime I can use a service like MongoDB Atlas, I’m going to take that so that we at Thermo Fisher can focus on what we’re good at, which is being the leader in serving science.”

To view Joseph & Eliot’s AWS re:Invent presentation in its entirety, click here.
