In today’s rapidly evolving manufacturing landscape, digital twins of factory processes have emerged as a game-changing technology. But why are they so important? Digital twins serve as virtual replicas of physical manufacturing processes, allowing organizations to simulate and analyze their operations in a virtual environment. By incorporating artificial intelligence and machine learning, organizations can interpret and classify objects, leading to cost reductions, faster throughput speeds, and improved quality levels. Real-time data, especially inventory information, plays a crucial role in these virtual factories, providing up-to-the-minute insights for accurate simulations and dynamic adjustments.

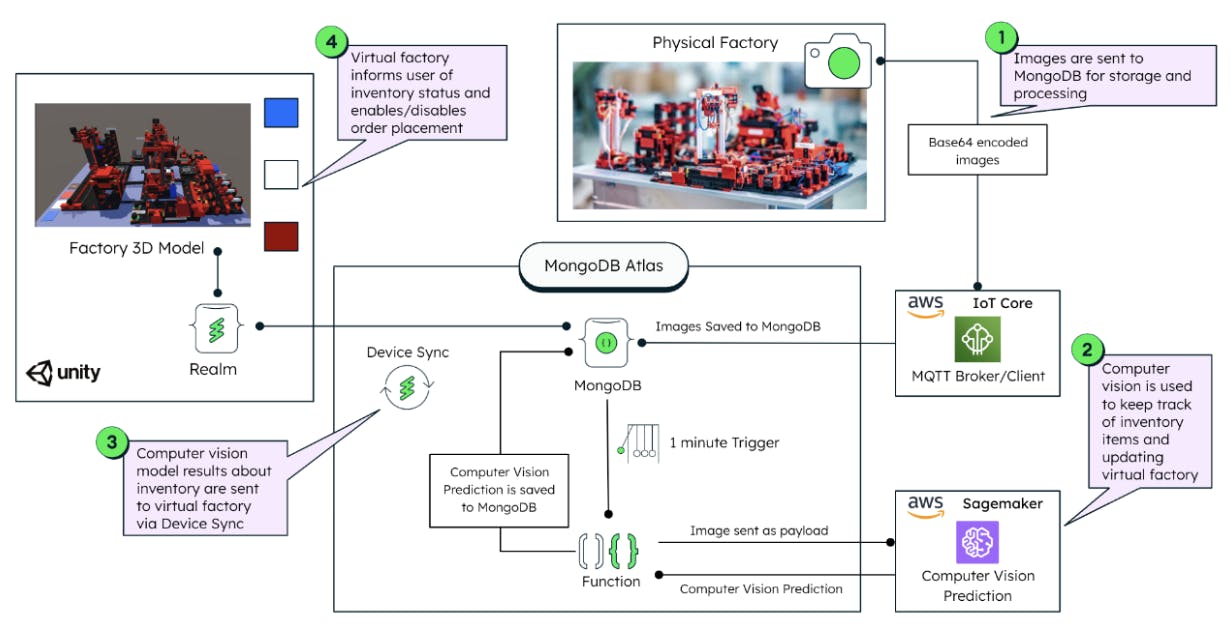

In the first blog, we covered a five-step, high-level plan to create a virtual factory. In this blog, we delve into the technical aspects of implementing a real-time computer vision inventory inference solution, as seen in Figure 1. Our focus will be on connecting a physical factory with its digital twin using MongoDB Atlas, which facilitates real-time interaction between the physical and digital realms. Let's get started!

Part 1: The physical factory sends data to MongoDB Atlas

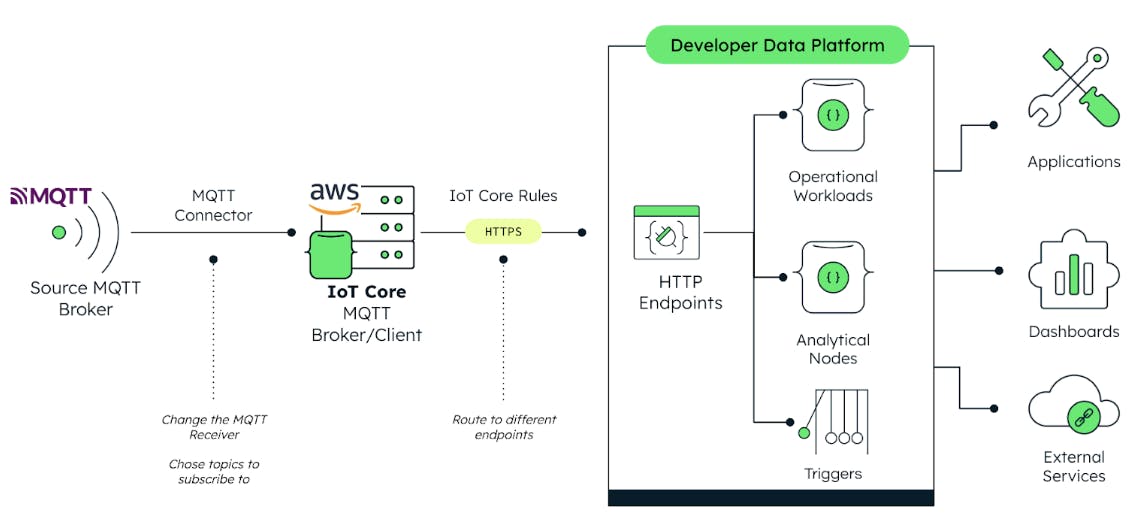

Let’s start with the first task: transmitting data from the physical factory to MongoDB Atlas. Here, we focus on sending captured images of raw material inventory from the factory to MongoDB for storage and further processing, as seen in Figure 2. Using the MQTT protocol, we send images as base64-encoded strings. AWS IoT Core serves as our MQTT broker, ensuring secure and reliable image transfer from the factory to MongoDB Atlas.
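As a minimal sketch of the encoding step, the factory-side code could prepare each message like this before handing it to an MQTT client. The helper name and the JSON field names (`image`, `captured_at`) are assumptions made for illustration, not the schema used in the demo.

```python
import base64
import json
from datetime import datetime, timezone


def build_image_payload(image_bytes: bytes, captured_at: datetime) -> str:
    """Return a JSON string carrying the image as a base64-encoded string.

    The field names are hypothetical; use whatever schema your pipeline expects.
    """
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return json.dumps({
        "image": encoded,
        "captured_at": captured_at.isoformat(),
    })


# Example with stand-in bytes instead of a real camera frame.
payload = build_image_payload(b"stand-in image bytes", datetime.now(timezone.utc))

# The receiver can recover the original bytes from the JSON field.
decoded = base64.b64decode(json.loads(payload)["image"])
```

The resulting string would then be published to a topic on AWS IoT Core with the MQTT client library of your choice.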

For simplicity, in this demo we store the base64-encoded image strings directly in MongoDB documents, because each image received from the physical factory is small enough to fit into a single document. However, this is not the only way to work with images (or large files in general) in MongoDB. Other storage options include binary data for files that fit within the 16MB document size limit and GridFS for larger ones. Moreover, object storage services like AWS S3 or Google Cloud Storage, coupled with MongoDB Atlas Data Federation, are commonly used in production scenarios.
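To make the size consideration concrete, here is a rough sketch of how one might decide between inline storage and GridFS. The function names and the 1 KB allowance for the document's other fields are assumptions for illustration. Base64 inflates the data by about a third, so the raw image must be well under 16MB to fit inline.

```python
import math

MAX_BSON_DOCUMENT_BYTES = 16 * 1024 * 1024  # MongoDB's 16MB document size limit
METADATA_HEADROOM_BYTES = 1024  # assumed allowance for the document's other fields


def base64_length(raw_size: int) -> int:
    """Length in characters of the padded base64 encoding of raw_size bytes."""
    return 4 * math.ceil(raw_size / 3)


def choose_storage(raw_size: int) -> str:
    """Return 'inline' if the encoded image fits in one document, else 'gridfs'."""
    if base64_length(raw_size) + METADATA_HEADROOM_BYTES <= MAX_BSON_DOCUMENT_BYTES:
        return "inline"
    return "gridfs"


small_image = choose_storage(200_000)            # a few hundred KB fits inline
large_image = choose_storage(13 * 1024 * 1024)   # 13MB raw exceeds 16MB once encoded
```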

In such production scenarios, integrating object storage services with MongoDB provides a scalable and cost-efficient architecture. MongoDB is excellent for fast and scalable reads and writes of operational data, but when retrieving images with very low latency is not a priority, storing these large files in ‘buckets’ helps reduce costs while keeping the benefits of working with MongoDB Atlas. Robert Bosch GmbH, for instance, uses this architecture for Bosch's IoT Data Storage, which helps service millions of devices worldwide efficiently.

Coming back to our use case: to facilitate communication between AWS IoT Core and MongoDB, we use Rules defined in AWS IoT Core, which let us forward data to an HTTPS endpoint. This endpoint is configured directly in MongoDB Atlas and allows us to receive and process incoming data. If you want to learn more about MongoDB Data APIs, check out this blog from our Developer Center colleagues.
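A rule of this kind can be sketched as a plain dictionary. The topic name and endpoint URL below are placeholders, not the values used in the demo, and the helper function is hypothetical. The structure follows the shape of an AWS IoT topic rule payload with an `http` action.

```python
def build_topic_rule_payload(topic: str, endpoint_url: str) -> dict:
    """Build a rule payload that forwards every message on `topic` to an HTTPS endpoint."""
    return {
        # AWS IoT rules select messages with a SQL-like statement.
        "sql": f"SELECT * FROM '{topic}'",
        "awsIotSqlVersion": "2016-03-23",
        # The http action posts each matching message to the given URL.
        "actions": [{"http": {"url": endpoint_url}}],
        "ruleDisabled": False,
    }


# Placeholder topic and URL, for illustration only.
rule = build_topic_rule_payload(
    "factory/inventory/images",
    "https://example.com/endpoint/images",
)
```

With boto3, a payload like this could be passed as `topicRulePayload` to the IoT client's `create_topic_rule` call.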

Part 2: MongoDB Atlas to AWS SageMaker for CV prediction

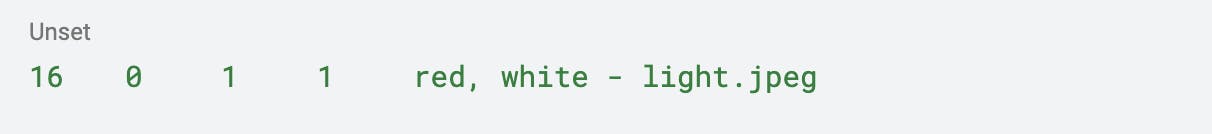

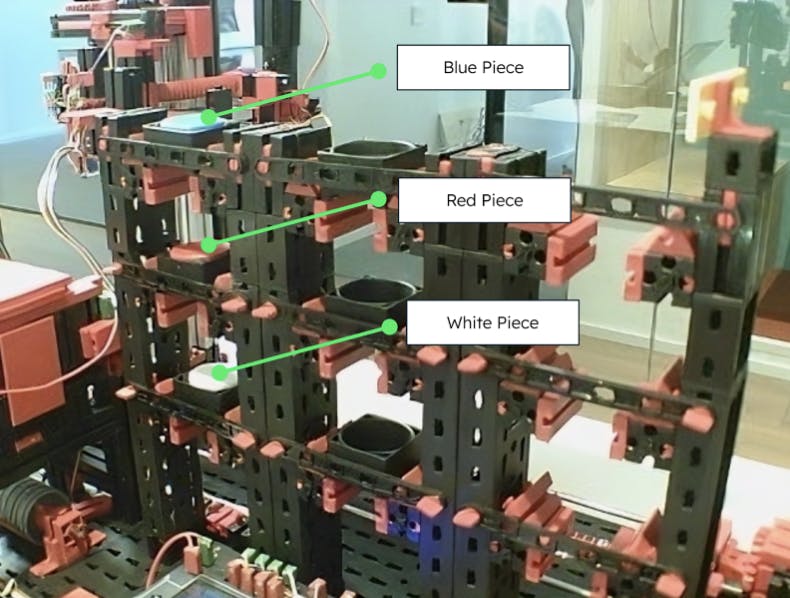

Now it’s time for the inference part! We’ve trained a built-in multi-label classification model provided by SageMaker, using images like the one in Figure 3. The images were annotated using the .lst file format, following the schema:

So for an image in which the red and white pieces are present in the warehouse but the blue piece is not, we would have an annotation such as:

The model was built using 24 training images and 8 validation images. This was a deliberate simplification, intended to demonstrate the capabilities of the implementation rather than to build a powerful model. Despite the extremely small training and validation sets, we managed to achieve a validation accuracy of 0.97.

With a model trained and ready to predict, we created a model endpoint in SageMaker. We send new images to it through a POST request, and it responds with the predicted values.
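The request itself can be sketched in Python as follows. This is not the Atlas Function used in the demo. It assumes a client that behaves like boto3's `sagemaker-runtime` client and a response body containing a JSON array of scores. The endpoint name is a placeholder, and a fake client stands in for AWS so the sketch runs without credentials.

```python
import io
import json


def predict_inventory(runtime_client, endpoint_name: str, image_bytes: bytes) -> list:
    """Send raw image bytes to a SageMaker endpoint and return the parsed scores.

    `runtime_client` is expected to behave like boto3.client("sagemaker-runtime").
    """
    response = runtime_client.invoke_endpoint(
        EndpointName=endpoint_name,
        ContentType="application/x-image",
        Body=image_bytes,
    )
    # The response body is a stream; this sketch assumes it holds a JSON array.
    return json.loads(response["Body"].read())


class FakeRuntimeClient:
    """Stand-in for the real client so the sketch runs without AWS access."""

    def invoke_endpoint(self, EndpointName, ContentType, Body):
        return {"Body": io.BytesIO(b"[0.02, 0.97, 0.91]")}


# "inventory-classifier" is a hypothetical endpoint name.
scores = predict_inventory(FakeRuntimeClient(), "inventory-classifier", b"raw image bytes")
```

Because the images are stored as base64 strings, they would need to be decoded back to raw bytes before being sent this way.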

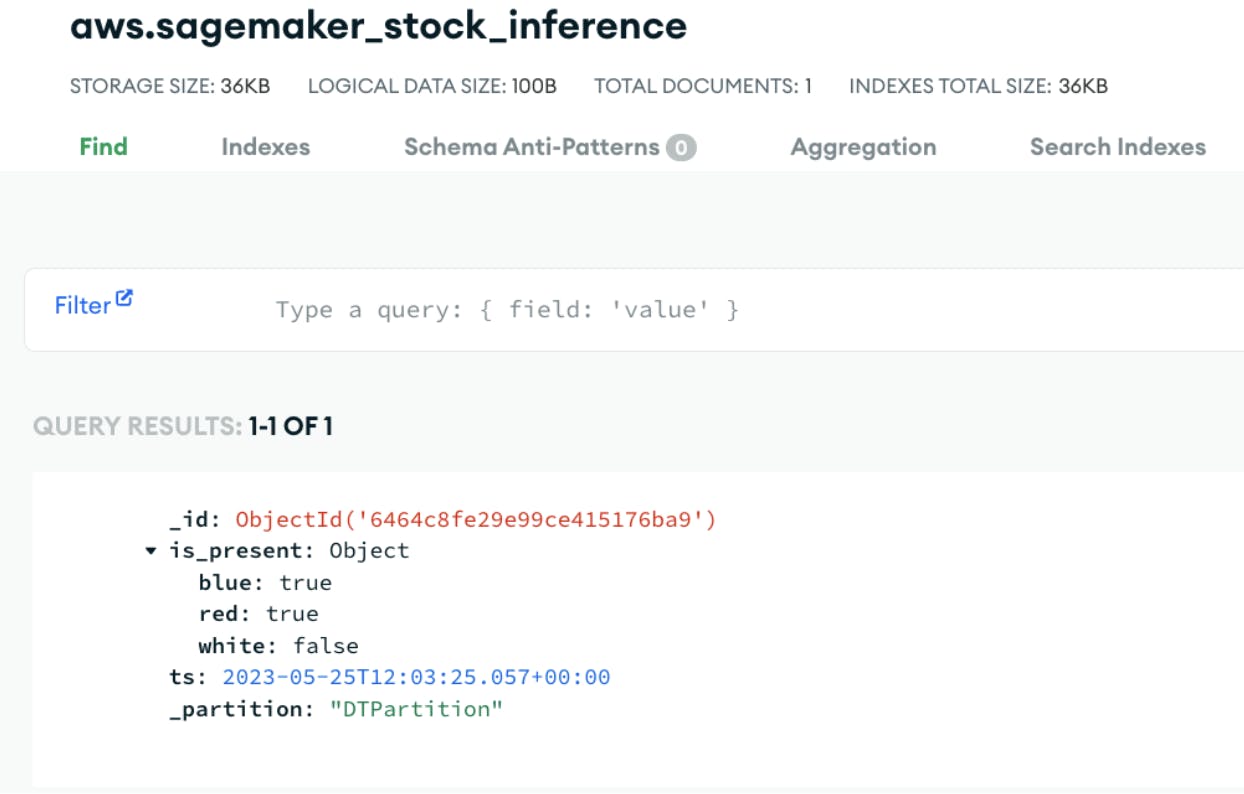

We use an Atlas Function to drive this functionality. Every minute, it grabs the latest image stored in MongoDB, sends it to the SageMaker endpoint, and waits for the response. The response is an array of three decimal values between 0 and 1, representing the likelihood that each piece (blue, red, white) is in stock. We interpret these values with a simple rule: if a value is above 0.85, we consider the piece to be in stock. Finally, the same Atlas Function writes the results to a collection (Figure 4) that keeps the current state of the physical factory's inventory.
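The thresholding rule is simple enough to sketch directly. The version below is in Python rather than the Atlas Function's own code, and the function and constant names are illustrative. It assumes the scores arrive in the order blue, red, white, as described above.

```python
PIECES = ("blue", "red", "white")  # order of the scores in the response
IN_STOCK_THRESHOLD = 0.85


def interpret_prediction(scores):
    """Map the three likelihood scores to an in-stock flag per piece."""
    if len(scores) != len(PIECES):
        raise ValueError(f"expected {len(PIECES)} scores, got {len(scores)}")
    # A piece counts as in stock only if its score is strictly above the threshold.
    return {piece: score > IN_STOCK_THRESHOLD for piece, score in zip(PIECES, scores)}


stock = interpret_prediction([0.02, 0.97, 0.91])
# stock == {"blue": False, "red": True, "white": True}
```

A dictionary like this corresponds to the kind of inventory state document the function writes back to the collection.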

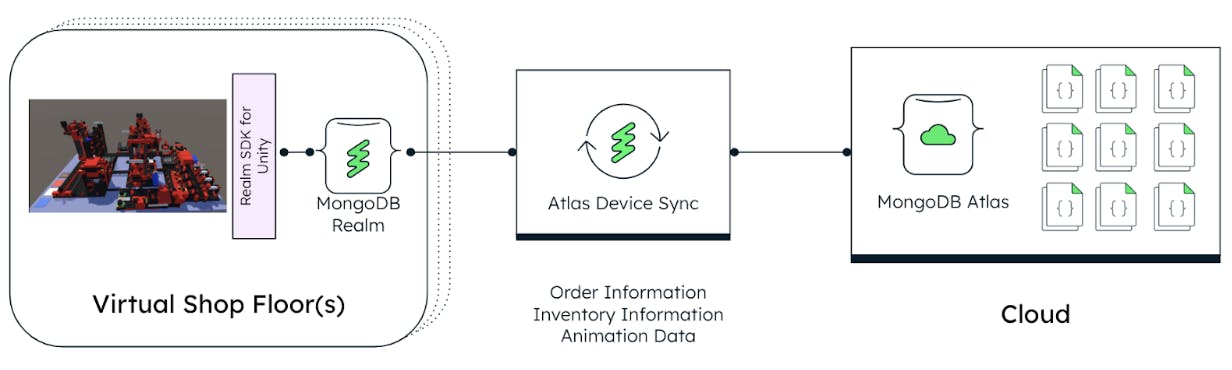

The beauty comes when MongoDB Realm is incorporated into the virtual factory, as seen in Figure 5. It is automatically and seamlessly synced with MongoDB Atlas through Device Sync. The moment we update the collection holding the inventory status of the physical factory in MongoDB Atlas, the virtual factory, with Realm, is automatically updated. The advantage here, besides not needing any additional code for the data transfer, is that conflict resolution is handled out of the box. When the connection is lost, no data is lost; it is synced as soon as the connection is re-established. This essentially enables a real-time synchronized digital twin without the hassle of managing data pipelines, handling edge cases in your code, and losing time on work that does not differentiate your product.

As an example of how companies are implementing Realm and Device Sync for mission-critical applications: the airline Cathay Pacific revolutionized how pilots log critical flight data such as wind speed, elevation, and oil pressure. Historically, this was done manually with pen and paper, until they switched to a fully digital, tablet-based app built with MongoDB, Realm, and Device Sync. With this, they eliminated all paper from flights and completed one of the first zero-paper flights in the world in 2019. Check out the full article here.

As you can see, the combination of these technologies is what enables the development of truly connected, highly performant digital twins within just one platform.

Part 3: CV results are sent to Digital Twin via Device Sync

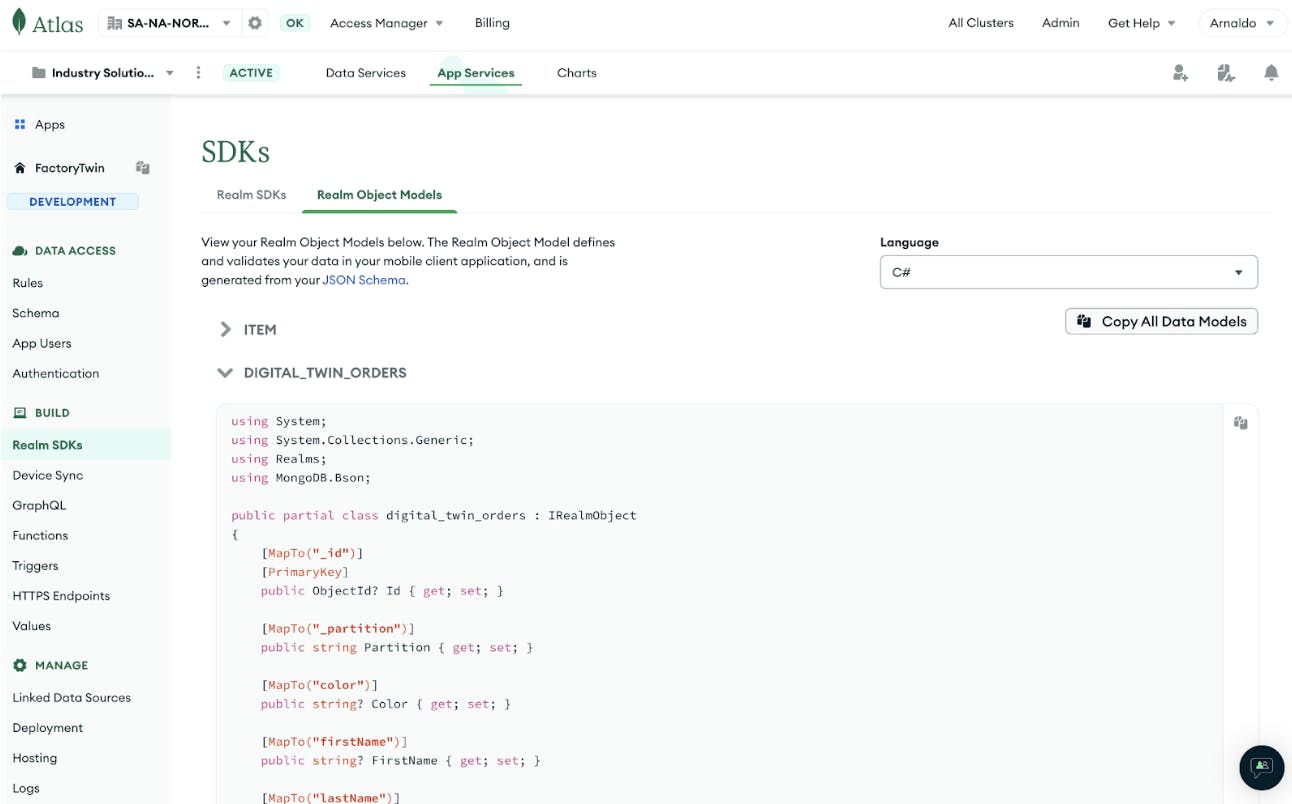

Sending data to the digital twin through Device Sync follows a straightforward procedure. First, developers navigate to Atlas and open the Realm SDK section. Here, they can choose their preferred programming language, and the data models are automatically generated from the schemas defined in the MongoDB collections. MongoDB Atlas simplifies this task by offering copy-paste functionality, as seen in Figure 6, eliminating the need to construct data models from scratch. For this project, we used the C# SDK. However, developers can select from various SDK options, including Kotlin, C++, Flutter, and more, depending on their preferences and project requirements. Once the data models are in place, simply activating Device Sync completes the setup. This enables seamless bidirectional communication, and developers can now send data to their digital twin effortlessly.

One of the key advantages of using Device Sync is its built-in conflict resolution capability. Whether facing offline interruptions or conflicting changes, MongoDB Atlas automatically manages conflict resolution. The "Always on" feature is particularly crucial for digital twins, ensuring constant synchronization between the device and MongoDB Atlas. This powerful feature saves developers significant time that would otherwise be spent building custom conflict resolution mechanisms, error-handling functions, and connection-handling methods. With Device Sync handling conflict resolution out of the box, developers can focus on building and improving their applications, confident in the seamless synchronization of data between the digital twin and MongoDB Atlas.

Part 4: Virtual factory sends inventory status to the user

For this demonstration, we built the digital twin of our physical factory using Unity so that users can interact with it through a VR headset. With this, the user can order a piece in the physical world by interacting with the digital twin, even from thousands of miles away from the real factory.

In order to control the physical factory through the headset, it’s crucial that the app informs the user whether or not a piece is present in the stock, and this is where Realm and Device Sync come into play.

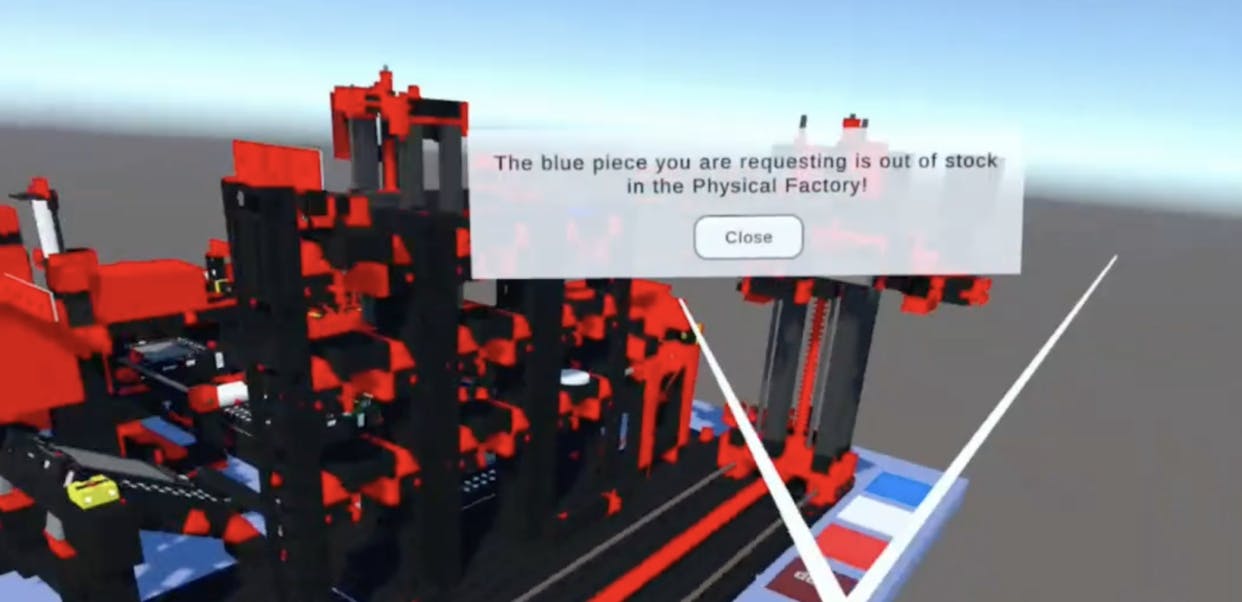

In Figure 7, the user tried to order a blue piece on the digital twin. The app informs the user that the piece is not in stock and therefore does not activate the order on either the physical factory or its digital twin. On the backend, the app reads the Realm object that stores the stock status of the physical factory and decides whether the piece can be ordered. Remember that this Realm object is in real-time sync with MongoDB Atlas, which in turn constantly updates the stock status in the collection shown in Figure 4, based on SageMaker inferences.
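The decision logic can be sketched in a few lines. The demo itself runs in Unity with the C# Realm SDK, so the Python below only illustrates the check. A plain dictionary stands in for the synced Realm object, and the function name and messages are hypothetical.

```python
def try_order(stock: dict, piece: str) -> str:
    """Place an order only if the synced stock status says the piece is available."""
    # Unknown pieces are treated as out of stock.
    if stock.get(piece, False):
        return f"Order placed for the {piece} piece."
    return f"The {piece} piece is not in stock."


# Stand-in for the Realm object kept in sync with MongoDB Atlas.
current_stock = {"blue": False, "red": True, "white": True}

blue_result = try_order(current_stock, "blue")
red_result = try_order(current_stock, "red")
```

Because the stock object is kept up to date by Device Sync, the app needs no extra code to fetch the latest inventory before making this check.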

Conclusion

In this blog, we presented a four-part process demonstrating the integration of a virtual factory and computer vision with MongoDB Atlas. This solution enables transformative real-time inventory management for manufacturing companies.