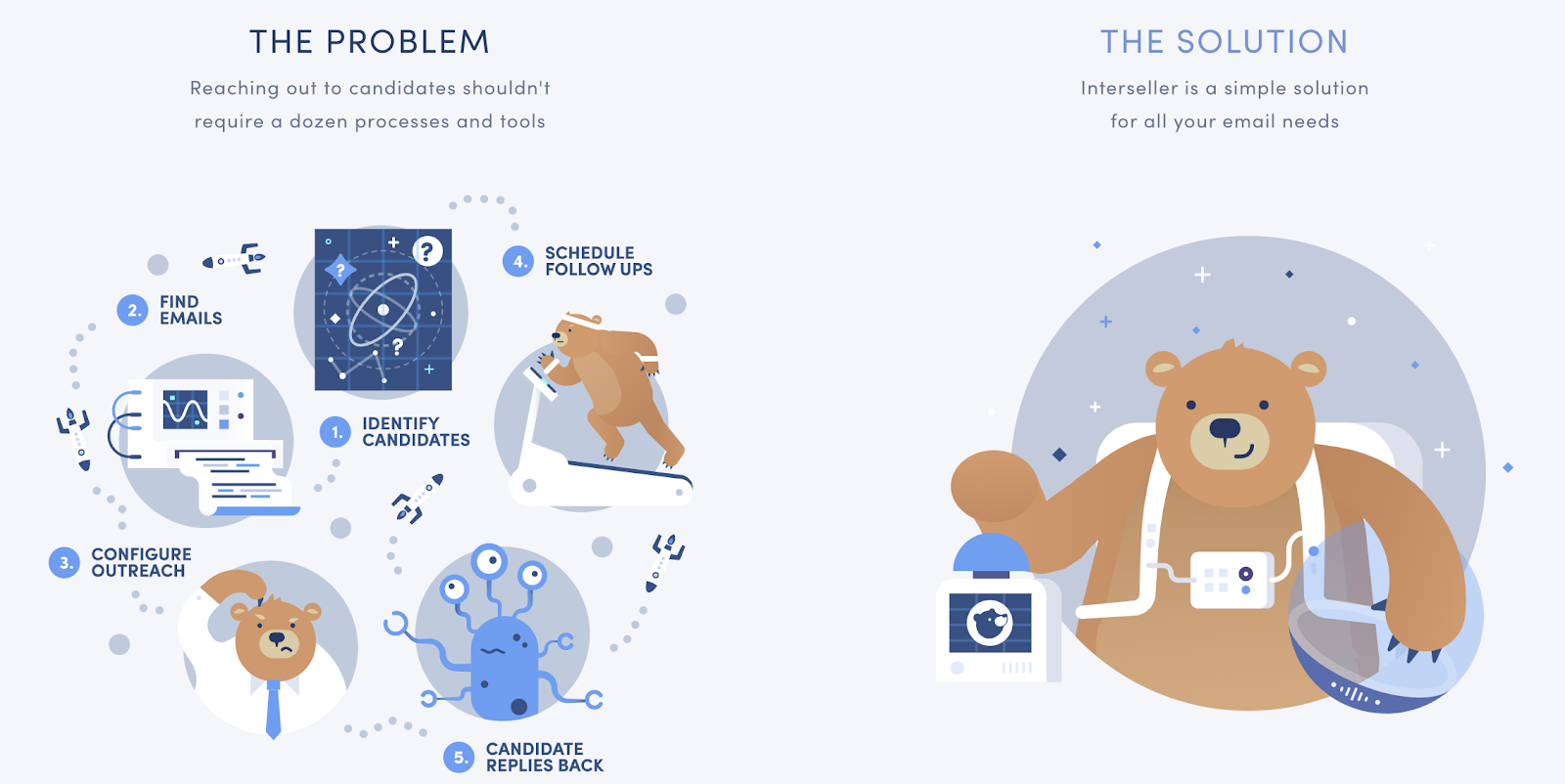

We all think our jobs are hard. But if you’re a recruiter, you know just how tough it is to place people into those jobs: the average response rate to recruiters is an abysmal 7%. Enter Interseller, a fast-growing NYC-based SaaS company in the recruiting tech space.

For this episode of #BuiltWithMongoDB, we go behind the scenes in recruiting technology with Steven Lu, co-founder and CEO of Interseller.

How did you pick this problem to work on?

While working as an engineer, I helped teach and recruit many other tech professionals. That’s when I realized that good engineers don’t find jobs. They get poached. Sourcing is essential for assembling great teams, but with the low industry response rate, I knew we needed a new solution.

I started looking into recruiting technology and was frankly surprised by how outdated the solutions were. We began by addressing three parts of sourcing:

- Research

- Outreach

- Data Management

To help us get started, Interseller went through Expa, Garrett Camp’s accelerator. We’ve been bootstrapping since then. We’re a team of 13, but we expect to grow to about 25 in another year.

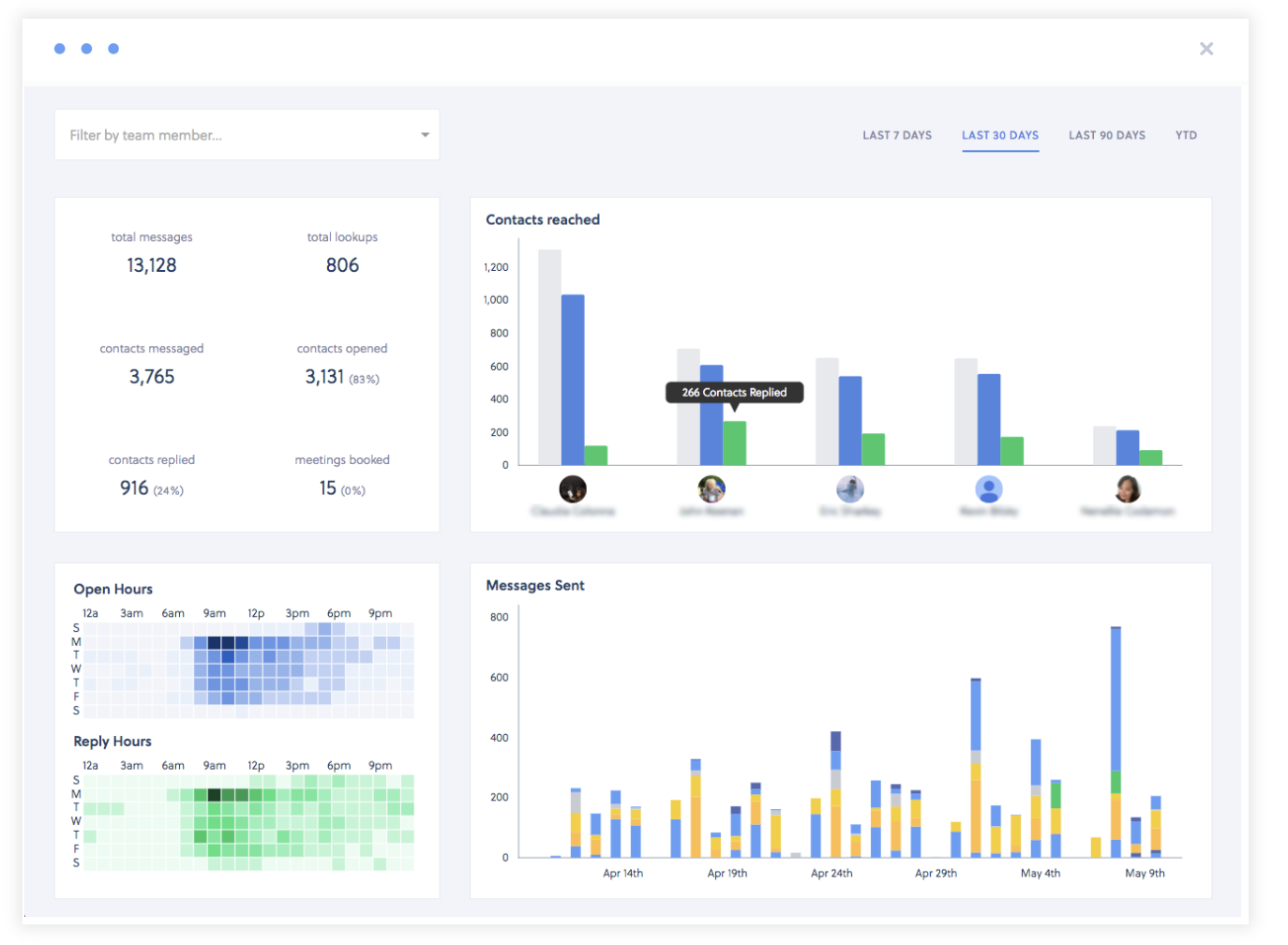

We serve about 4,000 recruiters, 75% of whom use us every single day. Our customers include Squarespace, Honey, and Compass. Overall, we have had about 2 million candidates respond, lifting our average response rate to between 40% and 60%, well above the industry average of 7%. We attempt to close candidates within 21 days.

How did you decide to have Interseller #BuiltWithMongoDB?

Like any engineer, I hate database migrations. I hate having to build my product around the database rather than having the database adapt to my product. I remember using MongoDB at Compass in 2012; we were a MongoDB shop.

After that, I went to another company that was using SQL and a relational database, and I felt we were constantly being blocked by database migrations. I had to depend on our CTO to run the database migration before I could merge anything. I have such bad memories from that experience. I would rather have my engineering team push things faster than have to wait on the database side.

MongoDB helped solve this. It worked well because it was so adaptable. I don’t know about scaling database solutions since we don’t have millions of users yet, but MongoDB has been a crucial part of getting core functionality, features, and bug fixes out much faster. Outside of MongoDB, we primarily use Node.js, JavaScript, React, and AWS.

Our release schedule is really short: as a startup, you have to keep pumping things out, and if half your time is spent on database migration, you won’t be able to serve customers. That’s why MongoDB Atlas is so core to our business. It’s reliable, and I don’t have to deal with database versions.

Looking to build something cool? Get started with the MongoDB for Startups program.